Frontend Test Automation Strategy: Optimizing Unit, E2E, and API Tests with Jest, Playwright, and MSW

- jest

- typescript

- vite

- vitest

- testinglibrary

- storybook

- playwright

- cypress

- expressjs

- graphql

- github

- prisma

- sonarqube


Introduction

In software development, test automation is a key factor in improving development speed while maintaining quality. However, introducing and operating tests comes with many challenges, such as “How far should we test?”, “What are the right tools?”, and “What is the optimal way to run tests in CI/CD?”

This article systematically explains test strategies in modern frontend and backend development, including automation of unit tests, component tests, E2E tests, and API tests. It also covers practical topics such as organizing and optimizing mock data, optimizing test execution in CI/CD, visualizing test coverage, and designing tests that account for feature flags.

I hope this article will serve as a useful guide for those considering introducing or strengthening tests, or those who want to make their current test strategy more effective.

Introducing and Strengthening Unit Tests (Jest / Vitest)

Importance of Unit Tests

Unit tests are an important technique for verifying the behavior of small units such as functions and classes. The main benefits are as follows:

- Early bug detection: testing individual features lets you identify issues quickly.

- Automated regression testing: when code changes, you can confirm that existing features still work correctly.

- Documentation: reading the test cases makes the intent of functions and classes clear.

- Faster development: tests reduce manual testing effort and integrate easily with Continuous Integration (CI).

Unit Testing with Jest

Jest is a major testing framework for JavaScript/TypeScript.

- Main features

- Intuitive to write with a simple API

- Rich snapshot testing and mocking features

- Works well with TypeScript

Introducing Jest (for TypeScript projects)

npm install --save-dev jest ts-jest ts-node @types/jest

Then create jest.config.ts to configure Jest for TypeScript. (Jest needs ts-node to read a configuration file written in TypeScript.)

import type { Config } from "jest";

const config: Config = {

preset: "ts-jest",

testEnvironment: "node",

};

export default config;

Writing Unit Tests with Jest

- Unit test for a function

For example, suppose you implement a sum function in math.ts.

export function sum(a: number, b: number): number {

return a + b;

}

To test this sum function, create math.test.ts.

import { sum } from "./math";

test("sum correctly adds two numbers", () => {

expect(sum(1, 2)).toBe(3);

});

To run Jest, use the following command:

npx jest

- Unit test for a class

You can also easily test classes. For example, create a Counter class.

export class Counter {

private count = 0;

increment() {

this.count++;

}

decrement() {

this.count--;

}

getCount(): number {

return this.count;

}

}

Create a test for this class.

import { Counter } from "./counter";

test("Counter increments and decrements correctly", () => {

const counter = new Counter();

counter.increment();

expect(counter.getCount()).toBe(1);

counter.decrement();

expect(counter.getCount()).toBe(0);

});

- Testing asynchronous processing

You can also test asynchronous functions using async/await.

export async function fetchData(): Promise<string> {

return new Promise((resolve) => {

setTimeout(() => resolve("Hello World"), 1000);

});

}

import { fetchData } from "./fetchData";

test("fetchData returns expected value", async () => {

const data = await fetchData();

expect(data).toBe("Hello World");

});
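The test above waits a real second for the timer to fire. Jest's fake timers (jest.useFakeTimers() with jest.advanceTimersByTime(1000)) are one way around that; another is to make the timer injectable. Below is a minimal, dependency-free sketch of the second option. The names createFetchData, Schedule, and runImmediately are hypothetical and not part of the original code.

```typescript
// A scheduler runs `callback` after `ms` milliseconds.
type Schedule = (callback: () => void, ms: number) => void;

// Default scheduler: a real timer, as in the original fetchData.
const realTimer: Schedule = (callback, ms) => {
  setTimeout(callback, ms);
};

// Hypothetical factory: same behavior as fetchData (resolves "Hello World"
// after 1000 ms), but the timer is injectable so tests need not wait.
export function createFetchData(schedule: Schedule = realTimer) {
  return function fetchData(): Promise<string> {
    return new Promise((resolve) => {
      schedule(() => resolve("Hello World"), 1000);
    });
  };
}

// Test helper: ignores the delay and runs the callback right away.
export const runImmediately: Schedule = (callback) => callback();
```

In a Jest or Vitest test, `await createFetchData(runImmediately)()` resolves without the one-second wait. If you would rather not change the function's signature, fake timers give the same speed-up without refactoring.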

Unit Testing with Vitest

Vitest is an ultra-fast test framework optimized for Vite.

It has an API similar to Jest and offers excellent TypeScript support.

Introducing Vitest (for TypeScript projects)

To use Vitest, install the following package:

npm install --save-dev vitest

Create the configuration file vite.config.ts.

import { defineConfig } from "vitest/config";

export default defineConfig({

test: {

globals: true,

environment: "node",

},

});

Writing Unit Tests with Vitest

With Vitest, you can write tests in almost the same way as Jest.

- Testing a function

import { describe, it, expect } from "vitest";

import { sum } from "./math";

describe("sum function", () => {

it("correctly adds two numbers", () => {

expect(sum(1, 2)).toBe(3);

});

});

- Testing a class

import { describe, it, expect } from "vitest";

import { Counter } from "./counter";

describe("Counter class", () => {

it("increments and decrements correctly", () => {

const counter = new Counter();

counter.increment();

expect(counter.getCount()).toBe(1);

counter.decrement();

expect(counter.getCount()).toBe(0);

});

});

- Testing asynchronous processing

import { describe, it, expect } from "vitest";

import { fetchData } from "./fetchData";

describe("fetchData function", () => {

it("returns expected value", async () => {

const data = await fetchData();

expect(data).toBe("Hello World");

});

});

To run Vitest, use the following command:

npx vitest

Jest vs Vitest Comparison

| Feature | Jest | Vitest |

|---|---|---|

| Execution speed | Relatively slow | Very fast (Vite-based) |

| API compatibility | Standard test framework | Largely compatible with Jest API |

| TypeScript support | Good | Excellent (leverages Vite’s TS support) |

| Async tests | Supports async/await | Supports async/await |

| Compatibility with Vite | No direct support | Optimized |

Which should you choose?

If your project already uses Vite, Vitest is the natural choice; otherwise, Jest is a safe default.

- Jest is suitable for projects not using Vite or environments already familiar with traditional Jest.

- Vitest is suitable for Vite-based projects or environments that require fast tests.

There is also a blog post on how to introduce Jest, so please refer to it as well.

Optimizing Component Tests (React Testing Library / Storybook)

This section explains in detail how to optimize React component tests by using React Testing Library (RTL) and Storybook.

Component Testing with React Testing Library (RTL)

React Testing Library (RTL) is a library for testing React components in a way that mimics real user interactions.

It is used for unit and integration tests and enables user-centric testing.

Introducing RTL

First, install the required packages.

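npm install --save-dev @testing-library/react @testing-library/jest-dom @testing-library/user-event

- @testing-library/react: core library for rendering and querying React components

- @testing-library/jest-dom: provides custom matchers such as toBeInTheDocument()

- @testing-library/user-event: simulates user interactions such as clicks and typing (used in the examples below)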
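Because RTL renders components into a DOM, Jest needs a browser-like environment instead of the "node" environment configured earlier, and the jest-dom matchers must be registered before the tests run. A possible jest.config.ts for component tests is sketched below. It assumes jest-environment-jsdom is installed (it ships as a separate package since Jest 28) and that tsconfig.json sets "jsx": "react-jsx"; the setup file name setupTests.ts is an arbitrary choice.

```typescript
// jest.config.ts for React component tests (a variant of the earlier config)
import type { Config } from "jest";

const config: Config = {
  preset: "ts-jest",
  // RTL renders into a DOM, so use jsdom instead of "node".
  // Requires: npm install --save-dev jest-environment-jsdom
  testEnvironment: "jsdom",
  // Loaded before each test file, after the test framework is set up,
  // so jest-dom can extend expect with toBeInTheDocument() and friends.
  setupFilesAfterEnv: ["<rootDir>/setupTests.ts"],
};

export default config;

// setupTests.ts (a separate file) needs only one line:
// import "@testing-library/jest-dom";
```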

Basic Test Example

import { render, screen } from "@testing-library/react";

import userEvent from "@testing-library/user-event";

import Button from "../components/Button";

test("Button click triggers event", async () => {

const onClick = jest.fn();

render(<Button onClick={onClick}>Click me</Button>);

await userEvent.click(screen.getByText("Click me"));

expect(onClick).toHaveBeenCalledTimes(1);

});

Key points

- render(<Button onClick={onClick}>Click me</Button>): use render() to render the component into a virtual DOM

- screen.getByText("Click me"): get elements via screen; getByText() finds the button by its text

- await userEvent.click(...): userEvent.click() simulates a click event, and awaiting it ensures the asynchronous processing has finished

- expect(onClick).toHaveBeenCalledTimes(1): the jest.fn() mock verifies how many times onClick was called

Commonly Used Queries

RTL recommends using accessibility-focused queries.

| Query | Usage | Example |

|---|---|---|

| getByRole | Get elements with a role such as button or heading | screen.getByRole('button', { name: 'Submit' }) |

| getByLabelText | Get form elements associated with a <label> | screen.getByLabelText('Email') |

| getByText | Get elements with specified text | screen.getByText('Hello, world!') |

| getByTestId | Get elements with a data-testid attribute | screen.getByTestId('custom-element') |

Example: Testing a labeled input field

import { render, screen } from "@testing-library/react";

import userEvent from "@testing-library/user-event";

import LoginForm from "../components/LoginForm";

test("typing into the Email field updates its value", async () => {

render(<LoginForm />);

const emailInput = screen.getByLabelText("Email");

await userEvent.type(emailInput, "test@example.com");

expect(emailInput).toHaveValue("test@example.com");

});

Optimizing Tests

- Use beforeEach/afterEach to consolidate common setup and teardown

- Use jest.spyOn() to spy on functions

- Use waitFor to properly wait for asynchronous processing

Example

DataComponent is a simple component that calls fetchData() inside useEffect when it mounts and displays the result.

import { useEffect, useState } from "react";

import { fetchData } from "../utils/api";

const DataComponent = () => {

const [data, setData] = useState<string | null>(null);

const [loading, setLoading] = useState(true);

const [error, setError] = useState<string | null>(null);

useEffect(() => {

const loadData = async () => {

try {

const response = await fetchData();

setData(response.data);

} catch (err) {

setError("Failed to load data");

} finally {

setLoading(false);

}

};

loadData();

}, []);

if (loading) {

return <p>Loading...</p>;

}

if (error) {

return <p>{error}</p>;

}

return <p>{data}</p>;

};

export default DataComponent;

Flow of behavior

| State | Displayed text |

|---|---|

| While fetching data | Loading... |

| On success | Hello, World! (depends on API response) |

| On error | "Failed to load data" |

The test code for this component is as follows:

import { render, screen, waitFor } from "@testing-library/react";

import { fetchData } from "../utils/api";

import DataComponent from "../components/DataComponent";

jest.mock("../utils/api"); // Mock the API

describe("DataComponent", () => {

beforeEach(() => {

jest.clearAllMocks(); // Reset mocks before each test

});

test("fetches data from API and displays it", async () => {

jest.mocked(fetchData).mockResolvedValueOnce({ data: "Hello, World!" }); // jest.mocked() gives the auto-mocked import its mock typings

render(<DataComponent />);

// Initially, "Loading..." is displayed

expect(screen.getByText("Loading...")).toBeInTheDocument();

// After async processing completes, confirm that "Hello, World!" is displayed

await waitFor(() => expect(screen.getByText("Hello, World!")).toBeInTheDocument());

// Confirm that "Loading..." has disappeared

expect(screen.queryByText("Loading...")).not.toBeInTheDocument();

});

test("displays an error message when the API returns an error", async () => {

jest.mocked(fetchData).mockRejectedValueOnce(new Error("Failed to fetch")); // jest.mocked() gives the auto-mocked import its mock typings

render(<DataComponent />);

expect(screen.getByText("Loading...")).toBeInTheDocument();

// After async processing completes, confirm that "Failed to load data" is displayed

await waitFor(() => expect(screen.getByText("Failed to load data")).toBeInTheDocument());

// Confirm that "Loading..." has disappeared

expect(screen.queryByText("Loading...")).not.toBeInTheDocument();

});

});

Key points

- Tests are grouped with describe.

- Related tests are organized and made cleaner.

- Added jest.clearAllMocks() in beforeEach

- Resets mock state between tests to ensure test independence

- Added error handling test

- By also testing behavior when fetchData fails, the code becomes more robust
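To see what mockResolvedValueOnce and jest.clearAllMocks() do for these tests, here is a deliberately simplified, dependency-free stand-in. createAsyncMock is a hypothetical helper, not Jest's implementation: it records calls, serves queued once-values in order, and separates clear() (like mockClear, which is what clearAllMocks applies to every mock) from reset() (like mockReset). The distinction matters: per Jest's documentation, clearing only resets recorded calls and results, so a once-value that a test queued but never consumed is still waiting for the next test.

```typescript
// One queued outcome: resolve with a value or reject with an error.
type Outcome<T> =
  | { kind: "resolve"; value: T }
  | { kind: "reject"; error: unknown };

// Hypothetical, simplified stand-in for an async jest.fn(). Not Jest's code.
export function createAsyncMock<T>() {
  const calls: unknown[][] = [];
  const queue: Outcome<T>[] = [];

  const mock = (...args: unknown[]): Promise<T> => {
    calls.push(args); // record every call, like mock.calls
    const next = queue.shift(); // once-values are consumed in FIFO order
    if (next === undefined) {
      // Nothing queued: this sketch resolves to undefined
      return Promise.resolve(undefined as T);
    }
    return next.kind === "resolve"
      ? Promise.resolve(next.value)
      : Promise.reject(next.error);
  };

  return Object.assign(mock, {
    calls,
    mockResolvedValueOnce(value: T): void {
      queue.push({ kind: "resolve", value });
    },
    mockRejectedValueOnce(error: unknown): void {
      queue.push({ kind: "reject", error });
    },
    // Like mockClear: forget recorded calls, keep queued once-values
    clear(): void {
      calls.length = 0;
    },
    // Like mockReset: also discard queued once-values
    reset(): void {
      calls.length = 0;
      queue.length = 0;
    },
  });
}
```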

There is also a blog post on how to introduce Testing Library / React, so please refer to it as well.

Integration with Storybook

Storybook is a tool that catalogs UI components and lets you develop and check them in isolation. It is particularly useful for reviewing a component's design and behavior, and it can also be used for visual regression tests and UI interaction tests.

Introducing Storybook

To add Storybook to your project, run the following command:

npx storybook init

Running this command automatically generates Storybook configuration files and sample stories in your project.

It also installs the necessary dependencies.

- A stories/ directory is created with sample component Story files

- .storybook/main.js and .storybook/preview.js are generated as configuration files

Starting Storybook

npm run storybook

or

yarn storybook

When executed, Storybook opens at localhost:6006.

Creating a Basic Story

In Storybook, you create a “Story” for each component to manage its states and variations.

import { Button } from "../components/Button";

export default {

title: "Button",

component: Button,

};

export const Default = () => <Button>Click me</Button>;

Structure of a Story

- title: specifies the category of the Story (with "Button", the Story appears under the Button category)

- component: the component used in the Story

- export const Default: the default Story (here, the default state of the Button component)

Once you create this Story, the Default version of Button will appear in Storybook, and you can manually check its behavior such as clicking.

Visual Testing with Storybook

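With Storybook, you can run visual (snapshot) tests that detect unintended changes to a component's UI by using @storybook/addon-storyshots. (Storyshots was deprecated in Storybook 7 and is not supported in Storybook 8, where the Storybook test runner is the recommended replacement.)

Installation

npm install --save-dev @storybook/addon-storyshots @storybook/react

Configuration (storyshots.test.ts)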

import initStoryshots from "@storybook/addon-storyshots";

initStoryshots();

How Storyshots works

- When Jest runs storyshots.test.ts, it creates snapshots of all Storybook stories

- .snap files are saved in the __snapshots__ folder and compared on the next test run to check for changes

- If the UI changes, Jest detects the differences and the test fails (if the change is intentional, update the snapshots with jest --updateSnapshot)

Interaction Tests

Storybook can also perform simple UI interaction tests using @storybook/addon-interactions. The example below uses the Storybook 7 packages @storybook/testing-library and @storybook/jest; in Storybook 8 the same helpers are exported from @storybook/test.

import { within, userEvent } from '@storybook/testing-library';

import { expect } from '@storybook/jest';

import { Button } from "../components/Button";

export default {

title: "Button",

component: Button,

};

// Log instead of alert(): a blocking dialog would stall automated runs

export const Clickable = () => <Button onClick={() => console.log("Clicked!")}>Click me</Button>;

Clickable.play = async ({ canvasElement }) => {

// Scope all queries to this story's canvas

const canvas = within(canvasElement);

// Explicitly check that "Click me" exists before clicking

expect(await canvas.findByText("Click me")).toBeInTheDocument();

// Simulate a click action

await userEvent.click(canvas.getByText("Click me"));

};

Interaction tests using the play function

- By defining a play function, you can simulate interactions (clicks, input, etc.) in the story

- Use within(canvasElement) to get the Story's DOM and apply userEvent to it

- Trigger click events and verify that the behavior is as intended

This allows you not only to try UI operations in Storybook, but also to incorporate UI behavior as automated tests.

Using Storybook Addons

Storybook has various addons. Here are some representative ones:

- @storybook/addon-essentials

- controls: provides a UI for manipulating props in Storybook

- actions: logs when component events (such as onClick) are fired

- docs: automatically generates documentation for stories

Installation

npm install --save-dev @storybook/addon-essentials

Configuration: add it to .storybook/main.js

module.exports = {

addons: ["@storybook/addon-essentials"],

};

- @storybook/addon-a11y

- An addon that performs accessibility checks on components

npm install --save-dev @storybook/addon-a11y

Configuration: add it to the same addons array in .storybook/main.js

module.exports = {

addons: ["@storybook/addon-essentials", "@storybook/addon-a11y"],

};

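Apply to a Story

No decorator is needed: once the addon is registered in .storybook/main.js, an Accessibility panel runs checks on every story, including a plain story file like this one. (The withA11y decorator shown in older guides was deprecated in Storybook 6 and is no longer required.)

import { Button } from "../components/Button";

export default {

title: "Button",

component: Button,

};

This allows you to detect accessibility issues (such as insufficient contrast or missing labels) in Storybook.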

Expanding E2E Tests (Playwright / Cypress)

E2E (End-to-End) tests are a testing method for verifying the behavior of the entire application. They automate user operations in the browser to check whether the UI behaves as expected.

Browser Testing with Playwright

Features of Playwright

- Multi-browser support: Can test on major browsers such as Chromium, Firefox, and WebKit

- Headless mode support: Can run tests quickly without displaying a GUI

- Mobile emulation: Can emulate specific device environments

- Parallel execution: Can run multiple tests in parallel for efficient test runs

Introducing Playwright

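npm init playwright@latest

This installs @playwright/test and the browsers, and generates a playwright.config.ts. To add Playwright to an existing project manually, run npm install --save-dev @playwright/test and then npx playwright install, which downloads the browsers and their dependencies.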

Sample Test

The following sample is a test that accesses http://localhost:3000 and verifies the page title.

import { test, expect } from "@playwright/test";

test("Home page has correct title", async ({ page }) => {

await page.goto("http://localhost:3000");

await expect(page).toHaveTitle(/My App/);

});

To run the test, use the following command:

npx playwright test
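Hard-coding http://localhost:3000 in every test can be avoided with a playwright.config.ts. The sketch below is one possible configuration, not the generated default; the ./e2e test directory, the npm run dev command, and the port are assumptions. It sets a baseURL so tests can call page.goto("/"), starts the dev server before the tests, and runs the same tests on three desktop browsers plus an emulated mobile device.

```typescript
import { defineConfig, devices } from "@playwright/test";

export default defineConfig({
  testDir: "./e2e", // assumed location of the E2E tests
  fullyParallel: true, // run tests within files in parallel too
  use: {
    // Lets tests call page.goto("/") instead of hard-coding the host
    baseURL: "http://localhost:3000",
  },
  // Start the app before testing (command and port are assumptions)
  webServer: {
    command: "npm run dev",
    url: "http://localhost:3000",
    reuseExistingServer: !process.env.CI, // reuse a local dev server, start fresh in CI
  },
  // One project per browser or device; each runs the full test suite
  projects: [
    { name: "chromium", use: { ...devices["Desktop Chrome"] } },
    { name: "firefox", use: { ...devices["Desktop Firefox"] } },
    { name: "webkit", use: { ...devices["Desktop Safari"] } },
    { name: "mobile-chrome", use: { ...devices["Pixel 5"] } },
  ],
});
```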

E2E Testing with Cypress

Features of Cypress

- Intuitive API: Provides a simple and easy-to-understand API

- Real-time debugging: Allows you to debug while checking the UI during test execution

- Browser support: runs tests in Chrome-family browsers (Chrome, Edge, Electron) and Firefox, with experimental WebKit support

- Snapshot feature: Captures intermediate states of tests to make debugging easier

Introducing Cypress

To add Cypress to your project, run the following command:

npm install --save-dev cypress

After installation, run the following command to start Cypress:

npx cypress open

Sample Test

The following sample tests navigation from the top page (/) to the about page.

describe("Navigation", () => {

it("should navigate to the about page", () => {

cy.visit("/");

cy.get("a[href='/about']").click();

cy.url().should("include", "/about");

});

});

To run tests in headless mode, use the following command:

npx cypress run
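cy.visit("/") in the sample above resolves against the base URL configured for Cypress. A minimal cypress.config.ts sketch, assuming the app is served at http://localhost:3000:

```typescript
import { defineConfig } from "cypress";

export default defineConfig({
  e2e: {
    // cy.visit("/") and other relative URLs resolve against this (assumed address)
    baseUrl: "http://localhost:3000",
  },
});
```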

Comparison of Playwright and Cypress

| Item | Playwright | Cypress |

|---|---|---|

| Browser support | Chromium, Firefox, WebKit | Chrome family, Electron, Firefox (WebKit experimental) |

| Parallel execution | Supported (built in) | Across machines via Cypress Cloud (commercial service) |

| Device emulation | Supported (mobile environment emulation) | Limited |

| API simplicity | Somewhat complex | Simple and intuitive |

| Debugging features | Strong code-based debugging | Strong UI-based debugging |

| Headless mode | Supported | Supported |

| Ease of setup | More dependencies | Can run immediately after installation |

Which should you choose?

Need tests on multiple browsers → Playwright

Want to quickly write simple UI tests → Cypress

Want to use parallel execution or mobile testing → Playwright

Want visual debugging → Cypress

Playwright is a highly versatile and feature-rich tool, while Cypress is easy to use even for beginners and easy to debug. Choose according to your project requirements.

There is also a blog post on how to introduce Playwright, so please refer to it as well.

Automating API Tests (Supertest / MSW)

API Testing with Supertest

Supertest is an ideal library for testing APIs in Node.js-based applications. It is particularly suitable for verifying the behavior of Express and GraphQL API endpoints.

Introducing Supertest

Supertest is based on superagent and makes it easy to test HTTP requests. To add it to devDependencies, run:

npm install --save-dev supertest @types/supertest

It is commonly combined with a test runner such as Jest; @types/supertest provides the TypeScript type definitions.

Basic Usage of Supertest

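Below is a simple example that tests the GET /api endpoint of an Express server. It assumes that ../server exports the Express app without calling app.listen(); Supertest binds the app to an ephemeral port itself.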

import request from "supertest";

import app from "../server";

test("GET /api returns 200", async () => {

const response = await request(app).get("/api");

expect(response.status).toBe(200);

expect(response.body).toEqual({ message: "Hello, world!" });

});

Key points

- request(app).get("/api") sends a GET /api request to the Express app (app).

- expect(response.status).toBe(200); verifies that the HTTP status code is 200 OK.

- expect(response.body).toEqual({ message: "Hello, world!" }); verifies the JSON content of the response.

For POST requests, you can send the request body using the send() method.

test("POST /api/data returns 201", async () => {

const response = await request(app)

.post("/api/data")

.send({ name: "Test Data" })

.set("Content-Type", "application/json");

expect(response.status).toBe(201);

expect(response.body).toEqual({ id: 1, name: "Test Data" });

});

Key points

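- .send({ name: "Test Data" }) sends the request body

- .set("Content-Type", "application/json") specifies the header explicitly (Supertest already sends an object passed to send() as JSON, so this line is optional)

- The test verifies the status and body of the response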

Testing GraphQL APIs is also easy.

test("GraphQL query returns expected response", async () => {

const response = await request(app)

.post("/graphql")

.send({

query: `{ user(id: 1) { name email } }`

})

.set("Content-Type", "application/json");

expect(response.status).toBe(200);

expect(response.body).toEqual({

data: {

user: { name: "John Doe", email: "john@example.com" }

}

});

});

Key points

- For GraphQL requests, send the query in JSON format

- Verify the data field in the response

There is also a blog post on how to introduce Supertest, so please refer to it as well.

Mock API Testing with MSW

Mock Service Worker (MSW) is a library for mocking APIs in browser and Node.js environments. It is especially useful in frontend tests, allowing you to emulate API calls even when the backend is not yet implemented.

Introducing MSW

Install MSW.

npm install --save-dev msw

In the test environment, you can use msw/node to mock APIs. The examples below use the MSW 2.x API (http and HttpResponse), which is what npm install msw currently installs; MSW 1.x used rest and res(ctx.json(...)) instead.

import { setupServer } from "msw/node";

import { http, HttpResponse } from "msw";

// Define mock API (Node has no page origin, so use an absolute URL; the host is hypothetical)

const server = setupServer(

http.get("https://api.example.com/api", () => {

return HttpResponse.json({ message: "Hello, world!" });

})

);

// Start the server before running tests

beforeAll(() => server.listen());

// Stop the server after tests finish

afterAll(() => server.close());

// Reset request handlers after each test

afterEach(() => server.resetHandlers());

test("GET /api returns mock response", async () => {

const response = await fetch("https://api.example.com/api");

const data = await response.json();

expect(response.status).toBe(200);

expect(data).toEqual({ message: "Hello, world!" });

});

Key points

- Use setupServer() to create a mock server in Node.js

- Start the mock server at the beginning of the tests with server.listen()

- Stop the server at the end of the tests with server.close()

- Remove handlers added during a test (via server.use()) with server.resetHandlers()

In browser environments, you can mock APIs using setupWorker() from msw/browser.

import { setupWorker } from "msw/browser";

import { http, HttpResponse } from "msw";

export const worker = setupWorker(

http.get("/api", () => {

return HttpResponse.json({ message: "Hello, world!" });

})

);

// Enable mocks in development environment

worker.start();

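How to use it in the frontend

- Generate the worker script once with npx msw init public/ (replace public/ with your static assets directory)

- Run worker.start(); at an entry point such as index.tsx or App.tsx

- API calls work during development without a backend

- You can check mock API requests and responses in the Network tab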

When to Use Supertest and MSW

| | Supertest | MSW |

|---|---|---|

| Use case | Testing backend APIs (Express / GraphQL) | Frontend development and testing |

| Environment | Node.js | Browser & Node.js |

| Sending requests | request(app).get("/api") | fetch("/api") |

| Verifying responses | expect(response.status).toBe(200) | expect(data).toEqual({...}) |

| Needs a real backend implementation | Yes (tests the actual app, which Supertest starts itself) | No (APIs are emulated) |

Key points

- Supertest is best for testing backend API endpoints

- MSW is convenient for mocking APIs in frontend development and testing

- Both can be combined with test environments such as Jest and Playwright

There is also a blog post on how to introduce MSW, so please refer to it as well.

Organizing and Optimizing Mock Data

When frontend and backend development are not in sync, or when building a stable test environment, organizing and optimizing mock data becomes an important issue. It is particularly effective to focus on the following points:

Generating Dummy Data with Faker

By using Faker, you can easily generate realistic test data. However, if different values are generated each time, tests become unstable, so use faker.seed() to output consistent data.

Install @faker-js/faker.

npm install --save-dev @faker-js/faker

Basic dummy data generation

import { faker } from '@faker-js/faker';

// Seed value for generating predictable data

faker.seed(123);

const user = {

id: faker.string.uuid(),

name: faker.person.fullName(),

email: faker.internet.email(),

phone: faker.phone.number(),

};

console.log(user);

Running this code generates the same data each time (as long as the Faker version stays the same), preserving test reproducibility.
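Why does seeding make the output repeatable? Faker draws its values from a pseudo-random number generator, and a PRNG started from the same seed produces the same sequence. The sketch below illustrates the principle with mulberry32, a small well-known PRNG; Faker uses its own generator, so these values will not match Faker's. makeUsers and its name list are hypothetical.

```typescript
// mulberry32: a tiny seeded PRNG returning floats in [0, 1).
// Faker uses its own generator; this only illustrates the principle.
function mulberry32(seed: number): () => number {
  let state = seed >>> 0;
  return () => {
    state = (state + 0x6d2b79f5) >>> 0;
    let t = state;
    t = Math.imul(t ^ (t >>> 15), t | 1);
    t ^= t + Math.imul(t ^ (t >>> 7), t | 61);
    return ((t ^ (t >>> 14)) >>> 0) / 4294967296;
  };
}

const NAMES = ["Alice", "Bob", "Carol", "Dave", "Eve"];

// Hypothetical builder: all randomness comes from the seeded generator,
// so the same seed always yields the same users.
export function makeUsers(seed: number, count: number) {
  const random = mulberry32(seed);
  return Array.from({ length: count }, (_, index) => ({
    id: index + 1,
    name: NAMES[Math.floor(random() * NAMES.length)],
    age: 18 + Math.floor(random() * 50),
  }));
}
```

Because the generator is created inside makeUsers, each call starts from a fresh seed; sharing one generator across builders would make the output depend on call order, which is a common source of flaky fixtures.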

Conversely, if you need values without duplicates, faker.helpers.unique() was the traditional tool, but it is deprecated in Faker v8 and removed in v9. For a fixed number of unique values, faker.helpers.uniqueArray() can be used instead.

const uniqueEmails = faker.helpers.uniqueArray(() => faker.internet.email(), 5);

console.log(uniqueEmails); // Array of unique email addresses
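Conceptually, collecting N unique values means calling the generator until a Set holds N distinct results, with an attempt cap so that a small value space fails loudly instead of looping forever. A dependency-free sketch; uniqueValues is a hypothetical helper, not part of Faker's API.

```typescript
// Hypothetical helper: call `generate` until `count` distinct values are
// collected, giving up after `maxAttempts` calls.
export function uniqueValues<T>(
  generate: () => T,
  count: number,
  maxAttempts: number = count * 50,
): T[] {
  const seen = new Set<T>();
  let attempts = 0;
  while (seen.size < count) {
    if (attempts >= maxAttempts) {
      throw new Error(`Only found ${seen.size} unique values after ${maxAttempts} attempts`);
    }
    attempts++;
    seen.add(generate()); // duplicates are ignored by the Set
  }
  return Array.from(seen); // insertion order is preserved
}
```

With Faker you would pass something like `() => faker.internet.email()` as the generator.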

Applying GraphQL Mock

When using GraphQL, you can use @graphql-tools/mock to build a mock server so that frontend development can proceed even when the backend API is not yet complete.

Install the necessary libraries.

npm install @graphql-tools/mock @graphql-tools/schema graphql

Create a GraphQL schema and provide mock data.

import { makeExecutableSchema } from '@graphql-tools/schema';

import { addMocksToSchema } from '@graphql-tools/mock';

import { graphql } from 'graphql';

import { faker } from '@faker-js/faker';

// Definition of GraphQL schema

const typeDefs = `

type User {

id: ID!

name: String!

email: String!

}

type Query {

users: [User!]!

}

`;

// Definition of mock responses

const mocks = {

User: () => ({

id: faker.string.uuid(),

name: faker.person.fullName(),

email: faker.internet.email(),

}),

};

// Create schema with mocks

const schema = makeExecutableSchema({ typeDefs });

const schemaWithMocks = addMocksToSchema({ schema, mocks });

// Execute query

const query = `

query {

users {

id

name

email

}

}

`;

graphql({ schema: schemaWithMocks, source: query }).then((result) =>

console.log(JSON.stringify(result, null, 2))

);

Key points

- Since mocks are generated from the GraphQL schema, you can develop in line with the API design.

- By using @graphql-tools/mock, you can change responses dynamically.

Applying Custom Resolvers to Provide Dynamic Data

By default, @graphql-tools/mock may return the same data repeatedly. To create more realistic behavior, you can apply custom resolvers.

import { makeExecutableSchema } from '@graphql-tools/schema';

import { addMocksToSchema } from '@graphql-tools/mock';

import { graphql } from 'graphql';

import { faker } from '@faker-js/faker';

// Schema definition

const typeDefs = `

type User {

id: ID!

name: String!

email: String!

}

type Query {

users: [User!]!

}

`;

// Definition of custom resolvers (passed via the resolvers option,
// which overrides the generated mocks for these fields)

const resolvers = {

Query: {

users: () => {

return Array.from({ length: 5 }).map(() => ({

id: faker.string.uuid(),

name: faker.person.fullName(),

email: faker.internet.email(),

}));

},

},

};

// Create schema with mocks and custom resolvers

const schema = makeExecutableSchema({ typeDefs });

const schemaWithMocks = addMocksToSchema({ schema, resolvers });

// Execute query

const query = `

query {

users {

id

name

email

}

}

`;

graphql({ schema: schemaWithMocks, source: query }).then((result) =>

console.log(JSON.stringify(result, null, 2))

);

Benefits

- You can generate different data for each query.

- You can dynamically customize data, allowing you to increase test scenarios.

There is also a blog post on using @graphql-tools/mock and Faker, so please refer to it as well.

Optimizing Test Execution Flow in CI/CD

To shorten test execution time in CI/CD, parallel execution and caching are essential.

Parallel Test Execution

Parallel test execution can significantly reduce CI/CD runtime.

Parallel execution in Jest

Jest performs parallel execution by default, but you can control the degree of parallelism by adjusting --max-workers.

jest --max-workers=50%

- --max-workers=50%: uses 50% of the available CPU cores (parallel execution with reduced load)

- --max-workers=4: runs with at most 4 workers

You can also size the worker pool dynamically from the CI machine's CPU count:

jest --max-workers=$(nproc)

(nproc is a Linux command that gets the number of available CPU cores)

Parallel Execution Using GitHub Actions Matrix

In GitHub Actions, you can use matrix to run different test cases in parallel.

For example, you can group test files and run each group in parallel as follows:

jobs:

test:

runs-on: ubuntu-latest

strategy:

matrix:

shard: [1, 2, 3, 4] # Split into 4

steps:

- name: Checkout repository

uses: actions/checkout@v3

- name: Setup Node.js

uses: actions/setup-node@v3

with:

node-version: 18

cache: "npm"

- name: Install dependencies

run: npm ci

- name: Run tests (sharded)

run: |

TEST_FILES=$(npx jest --listTests | sort | awk "NR % 4 == $(( ${{ matrix.shard }} - 1 ))")

npx jest $TEST_FILES --max-workers=2

- Use jest --listTests to get all test files and split them into 4 groups that run in parallel.

- Limit each job to 2 workers with --max-workers=2.

- Jest 28 and later can also shard natively: npx jest --shard=${{ matrix.shard }}/4.
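The awk filter assigns files round-robin: after sorting, shard k (1-based) of n keeps the files whose line number NR satisfies NR % n == k - 1. The same rule as a small TypeScript function makes it easy to check that every file lands in exactly one shard. filesForShard is a hypothetical helper for illustration, not part of Jest.

```typescript
// Hypothetical helper mirroring the awk filter in the workflow:
// sort the file list, then shard k (1-based) of n keeps the files whose
// 1-based line number NR satisfies NR % n === k - 1.
export function filesForShard(
  files: readonly string[],
  shard: number,
  totalShards: number,
): string[] {
  if (!Number.isInteger(shard) || shard < 1 || shard > totalShards) {
    throw new RangeError(`shard must be an integer from 1 to ${totalShards}`);
  }
  return [...files]
    .sort() // every job must sort the same way so the groups line up
    .filter((_, index) => (index + 1) % totalShards === shard - 1);
}
```

Because each job sorts the list independently, the split only works if all jobs see the same file list and sort order; that is why the workflow pipes through sort before awk.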

Using Cache

To speed up test execution, it is important to use caching appropriately.

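Caching npm dependencies

You can cache npm's download cache (~/.npm) to speed up dependency installation. Note that this caches downloaded packages rather than node_modules itself, and that actions/setup-node with cache: "npm" (as in the workflow above) already does the same thing.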

In GitHub Actions

- name: Cache node_modules

uses: actions/cache@v3

with:

path: ~/.npm

key: npm-${{ hashFiles('package-lock.json') }}

restore-keys: |

npm-

- The cache is reused as long as package-lock.json does not change; otherwise restore-keys falls back to the most recent cache whose key starts with npm-.

In CircleCI

- restore_cache:

keys:

- npm-deps-{{ checksum "package-lock.json" }}

- run: npm ci

- save_cache:

key: npm-deps-{{ checksum "package-lock.json" }}

paths:

- ~/.npm
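Both configurations follow the same idea: derive the cache key from a hash of the lockfile, and fall back to a prefix match when there is no exact hit. The sketch below models that behavior; cacheKey and findCache are hypothetical names, GitHub's hashFiles uses its own hashing scheme, and the real lookup is performed by the CI provider's cache service.

```typescript
import { createHash } from "node:crypto";

// Hypothetical helper illustrating key: npm-${{ hashFiles('package-lock.json') }}.
// The key depends only on the lockfile content, so it changes exactly when dependencies change.
export function cacheKey(prefix: string, lockfileContent: string): string {
  const digest = createHash("sha256").update(lockfileContent).digest("hex");
  return `${prefix}-${digest}`;
}

// Simplified model of the lookup: an exact key match wins; otherwise each
// restore-keys prefix is tried in order and the newest matching entry is used.
export function findCache(
  key: string,
  restorePrefixes: readonly string[],
  entriesNewestFirst: readonly string[],
): string | undefined {
  if (entriesNewestFirst.includes(key)) return key;
  for (const prefix of restorePrefixes) {
    const match = entriesNewestFirst.find((entry) => entry.startsWith(prefix));
    if (match !== undefined) return match;
  }
  return undefined;
}
```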

Jest Cache

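Jest caches transformed files (for example, TypeScript compiled by ts-jest) and related metadata between runs. The cache is enabled by default (--cache); it does not skip tests, but it avoids re-transforming unchanged files. By default it lives in a temporary directory, so point it at a known path to persist it in CI:

jest --cacheDirectory=.jest/cache

You can then save that directory in GitHub Actions to use it in the next run.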

- name: Cache Jest cache

uses: actions/cache@v3

with:

path: .jest/cache

key: jest-${{ github.run_id }}

restore-keys: |

jest-

Prisma Cache

Since prisma generate produces Prisma Client (including its TypeScript types) from the Prisma schema, it can be costly to run, but you can cache its output.

In GitHub Actions

- name: Cache Prisma

uses: actions/cache@v3

with:

path: node_modules/.prisma

key: prisma-${{ hashFiles('prisma/schema.prisma') }}

restore-keys: |

prisma-

In CircleCI

- restore_cache:

keys:

- prisma-cache-{{ checksum "prisma/schema.prisma" }}

- run: npx prisma generate

- save_cache:

key: prisma-cache-{{ checksum "prisma/schema.prisma" }}

paths:

- node_modules/.prisma

Test Design with Dependency Management (Separating Tests by Environment)

To improve test reliability, it is important to separate tests by environment and manage them appropriately.

Ideally, you should separate environments by test type and clearly control dependencies as follows:

Unit Tests

- Purpose

- Verify that individual functions and classes behave as expected.

- Dependencies

- Do not depend on external resources (DB, API, file system, etc.); mock everything.

- Execution environment

- Run quickly on local machines (can also run in parallel in CI)

Design points

- Mock external dependencies (DB, API, storage)

- Stateless, independent test cases

- Minimal test data

- Fast execution (from a few ms to a few hundred ms)

Example: Mocking API responses

import { fetchUser } from '../src/userService';

import axios from 'axios';

jest.mock('axios');

describe('fetchUser', () => {

it('should return user data', async () => {

(axios.get as jest.Mock).mockResolvedValue({ data: { id: 1, name: 'Alice' } });

const user = await fetchUser(1);

expect(user).toEqual({ id: 1, name: 'Alice' });

});

});

Key points

- Mock axios to eliminate dependency on external APIs

- Verify the function's behavior in isolation, without any network access
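An alternative to jest.mock('axios') is to pass the HTTP client in as a parameter, so a unit test can supply a plain fake object. The sketch assumes fetchUser only needs a get method that resolves to an object with a data field (axios.get returns such a response); the signature, the /users/:id URL, and createFakeClient are hypothetical and not the ../src/userService from the example above.

```typescript
// Minimal surface fetchUser needs from an HTTP client (an assumption).
export interface HttpClient {
  get<T>(url: string): Promise<{ data: T }>;
}

export interface User {
  id: number;
  name: string;
}

// Hypothetical variant of fetchUser that receives its client explicitly.
export async function fetchUser(id: number, http: HttpClient): Promise<User> {
  const response = await http.get<User>(`/users/${id}`);
  return response.data;
}

// Hand-written fake: canned responses keyed by URL, plus a log of requests.
export function createFakeClient(
  responses: Record<string, unknown>,
): HttpClient & { requested: string[] } {
  const requested: string[] = [];
  return {
    requested,
    async get<T>(url: string): Promise<{ data: T }> {
      requested.push(url);
      if (!(url in responses)) {
        throw new Error(`Unexpected request: ${url}`);
      }
      return { data: responses[url] as T };
    },
  };
}
```

In production code you would pass a real client (for example, an axios instance) at the call site; whether it type-checks against HttpClient depends on your axios version's typings, so treat that as something to verify.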

Integration Tests

- Purpose

- Verify that multiple components and services work together correctly.

- Dependencies

- Use actual databases and APIs, but make them switchable by environment.

- Execution environment

- Use docker-compose in CI/CD to unify dependencies.

Design points

- Test connections with actual DBs and APIs

- Set up and clean up data

- Build the same environment locally and in CI (unify with Docker)

Example: Integration test using PostgreSQL

import { PrismaClient } from '@prisma/client';

const prisma = new PrismaClient();

describe('User Repository Integration Test', () => {
  beforeAll(async () => {
    await prisma.$connect();
  });

  afterAll(async () => {
    await prisma.$disconnect();
  });

  beforeEach(async () => {
    await prisma.user.deleteMany(); // Reset data
  });

  it('should create and retrieve a user', async () => {
    await prisma.user.create({ data: { name: 'Alice' } });

    const users = await prisma.user.findMany();

    expect(users).toHaveLength(1);
    expect(users[0].name).toBe('Alice');
  });
});

Key points

- Run real queries against the database to verify the repository's actual behavior

- Reset data in beforeEach to prevent tests from affecting each other

Use Docker so that every environment runs the same database:

version: '3.8'

services:
  postgres:
    image: postgres:14
    environment:
      POSTGRES_USER: test
      POSTGRES_PASSWORD: test
      POSTGRES_DB: test_db
    ports:
      - "5432:5432"

Key points

- Run docker-compose up both locally and in CI to get the same environment in both places

How to define the PostgreSQL connection

- Define a test database in .env.test

For integration tests, it is recommended to use a dedicated test database separate from production data. Therefore, create a test-specific .env.test and separate the connection.

Example of .env.test

DATABASE_URL=postgresql://test:test@localhost:5432/test_db?schema=public

- Apply .env.test when running Jest

Prisma reads .env by default, but to apply .env.test for the test environment, load dotenv in Jest’s setupFiles.

Create jest.setup.ts

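import dotenv from 'dotenv';

// Load `.env.test` before any test file (and Prisma) reads process.env.
// Note: dotenv does not overwrite variables that are already set.
dotenv.config({ path: '.env.test' });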

Then specify this file in Jest’s setupFiles.

jest.config.js

module.exports = {
  setupFiles: ['<rootDir>/jest.setup.ts'],
};
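Because beforeEach deletes every user row, pointing the suite at the wrong DATABASE_URL would be destructive. A minimal safety check could be called at the top of jest.setup.ts right after dotenv.config(). It assumes a team convention (not something Prisma or Jest requires) that test databases run on localhost and have "test" in their name; assertTestDatabase is a hypothetical helper.

```typescript
// Hypothetical guard: throws unless the URL points at a local database whose name contains "test".
// The naming rule is an assumed convention; adjust it to your own setup.
export function assertTestDatabase(databaseUrl: string | undefined): string {
  if (!databaseUrl) {
    throw new Error("DATABASE_URL is not set; did .env.test load?");
  }
  const url = new URL(databaseUrl);
  const dbName = decodeURIComponent(url.pathname.replace(/^\//, ""));
  const isLocalHost = url.hostname === "localhost" || url.hostname === "127.0.0.1";
  if (!isLocalHost || !/test/i.test(dbName)) {
    throw new Error(`Refusing to run destructive tests against "${dbName}" on ${url.hostname}`);
  }
  return dbName;
}

// Usage, e.g. in jest.setup.ts right after dotenv.config({ path: '.env.test' }):
// assertTestDatabase(process.env.DATABASE_URL);
console.log(assertTestDatabase("postgresql://test:test@localhost:5432/test_db?schema=public")); // test_db
```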

E2E Tests (End-to-End Tests)

- Purpose

- Simulate user operations and verify that the entire system behaves as expected.

- Dependencies

- Prepare a production-like environment (staging) and use actual APIs and DBs.

- Execution environment

- Separate staging and production, and switch between them via environment variables.

- Use Playwright or Cypress.

Design points

- Automate browser operations and test actual user behavior

- Run in the staging environment, separate from production

- Save logs and screenshots for easier debugging

Example: Login test using Playwright

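import { test, expect } from '@playwright/test';

// Target environment comes from BASE_URL (defaults to staging)
const BASE_URL = process.env.BASE_URL ?? 'https://staging.example.com';

test('Verify behavior when login succeeds', async ({ page }) => {
  await page.goto(`${BASE_URL}/login`);

  // Enter credentials for a test account and submit
  await page.fill('input[name="email"]', 'test@example.com');
  await page.fill('input[name="password"]', 'password123');
  await page.click('button[type="submit"]');

  // A successful login should redirect to the dashboard
  await expect(page).toHaveURL(`${BASE_URL}/dashboard`);
});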

Key points

- Automate browser operations and test actual behavior

- Switch between staging and production with environment variables

Switching endpoints with environment variables (set one value per run):

# Staging
BASE_URL=https://staging.example.com

# Production
BASE_URL=https://example.com
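One way to consume BASE_URL is to resolve it once and pass it to Playwright's baseURL option in playwright.config.ts, after which tests can call page.goto('/login'). The helper below is a hypothetical sketch, assuming staging should be the default so that production is targeted only when BASE_URL is set explicitly.

```typescript
// Hypothetical helper: picks the E2E target from the environment, defaulting to staging.
const DEFAULT_BASE_URL = "https://staging.example.com";

export function resolveBaseUrl(env: Record<string, string | undefined>): string {
  const raw = env.BASE_URL?.trim() || DEFAULT_BASE_URL;
  const url = new URL(raw); // Throws on malformed values instead of failing later inside a test
  if (url.protocol !== "https:" && url.protocol !== "http:") {
    throw new Error(`BASE_URL must be an http(s) URL: ${raw}`);
  }
  return url.origin; // Drops any trailing slash or path so "/login"-style paths join cleanly
}

console.log(resolveBaseUrl({})); // https://staging.example.com
console.log(resolveBaseUrl({ BASE_URL: "https://example.com/" })); // https://example.com

// In playwright.config.ts this value could be passed as use.baseURL, e.g.
// export default defineConfig({ use: { baseURL: resolveBaseUrl(process.env) } });
```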

Summary of Test Types

| Test type | Purpose | Dependencies | Execution environment |
|---|---|---|---|
| Unit tests | Verify behavior of individual functions/classes | All mocked | Local, CI |
| Integration tests | Verify cooperation between services | Use actual DBs/APIs | CI (docker-compose) |
| E2E tests | Simulate user operations | Use staging environment | staging or production |

Visualizing Test Coverage (Applying Codecov / SonarQube)

Visualizing test coverage is essential for maintaining and improving code quality. This section uses two tools: Codecov, which tracks coverage over time, and SonarQube, which combines coverage with static analysis of code quality.

Using Codecov

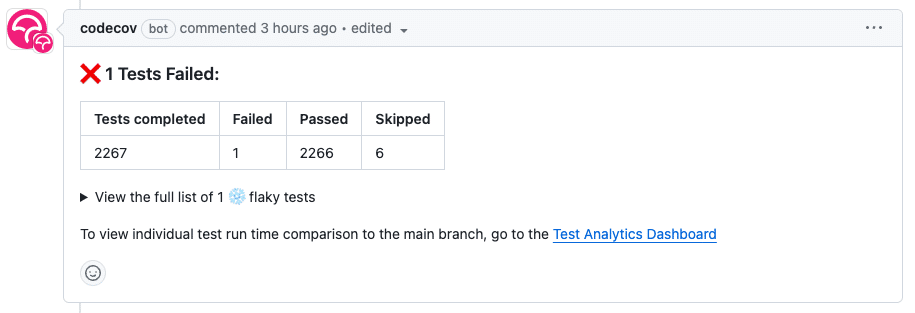

Codecov is a service that visualizes test coverage (which code is tested) and tracks coverage changes by integrating with repositories such as GitHub.

- Visualizes coverage changes per PR

- Can integrate with multiple CI/CD systems

- Automatically posts coverage reports as comments on GitHub

- Analyzes coverage trends on a web dashboard

Benefits of managing coverage with Codecov in CI/CD include:

- Display coverage changes in GitHub PRs

- Check past coverage history in the web UI

- Prevent overall project coverage from dropping

- Integrate with multiple test frameworks (Jest, Mocha, Pytest, etc.)

- Setting up Codecov

To use Codecov in your project, follow these steps:

- Create a Codecov account

Sign in to the official Codecov site with your GitHub account and add your repository.

- Add codecov-action to GitHub Actions

Add codecov-action to your GitHub Actions workflow and upload coverage reports after running tests.

Example: GitHub Actions (.github/workflows/test.yml)

name: Run Tests and Upload Coverage

on: [push, pull_request]

jobs:
  test:
    runs-on: ubuntu-latest
    steps:
      - name: Checkout code
        uses: actions/checkout@v3

      - name: Setup Node.js
        uses: actions/setup-node@v3
        with:
          node-version: 18

      - name: Install dependencies
        run: npm install

      - name: Run tests with coverage
        run: npm test -- --coverage

      - name: Upload coverage to Codecov
        uses: codecov/codecov-action@v3
        with:
          token: ${{ secrets.CODECOV_TOKEN }} # Codecov token (register in repository Secrets)

- Coverage settings in jest.config.js

If you are using Jest, add settings to jest.config.js to output coverage.

module.exports = {
  collectCoverage: true,
  collectCoverageFrom: ["src/**/*.{js,jsx,ts,tsx}"],
  coverageDirectory: "coverage",
  coverageReporters: ["json", "lcov", "text", "clover"],
};
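The lcov reporter writes coverage/lcov.info, one of the formats Codecov's uploader can pick up and the file SonarQube reads through sonar.javascript.lcov.reportPaths. To make the numbers concrete, here is a minimal sketch that totals the LF (lines found) and LH (lines hit) records of an lcov file and compares the result with a threshold. summarizeLcov, meetsThreshold, and the 80% default are illustrative assumptions, not part of Jest or Codecov.

```typescript
export interface LineCoverage {
  found: number; // Sum of LF records: instrumented lines
  hit: number; // Sum of LH records: lines executed at least once
  pct: number; // hit / found as a percentage
}

// Minimal lcov reader: only aggregates the per-file LF/LH summary lines
export function summarizeLcov(lcov: string): LineCoverage {
  let found = 0;
  let hit = 0;
  for (const rawLine of lcov.split(/\r?\n/)) {
    const line = rawLine.trim();
    if (line.startsWith("LF:")) found += Number(line.slice(3));
    else if (line.startsWith("LH:")) hit += Number(line.slice(3));
  }
  return { found, hit, pct: found === 0 ? 100 : (hit / found) * 100 };
}

// Hypothetical CI gate in the spirit of "fail if below 80%"
export function meetsThreshold(summary: LineCoverage, minPct = 80): boolean {
  return summary.pct >= minPct;
}

const sample = [
  "SF:src/a.ts", "DA:1,1", "DA:2,0", "LF:2", "LH:1", "end_of_record",
  "SF:src/b.ts", "DA:1,3", "DA:2,1", "LF:2", "LH:2", "end_of_record",
].join("\n");

const summary = summarizeLcov(sample);
console.log(summary); // { found: 4, hit: 3, pct: 75 }
console.log(meetsThreshold(summary)); // false
```

In practice, Jest's own coverageThreshold option (for example { global: { lines: 80 } }) enforces a global floor without custom code; the sketch only shows what the lcov numbers represent.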

- Checking Codecov reports

When you create a PR, Codecov automatically generates a coverage report that you can check on GitHub.

- Coverage rate per file

- Line-by-line coverage

- Coverage increase/decrease due to changes

You can also display analysis results in PRs.

Reference: When should you use Codecov?

| Situation | Jest alone is enough | Codecov is useful |
|---|---|---|
| Checking coverage locally | ✅ | ❌ |
| Small-scale project (personal) | ✅ | ❌ |
| Want to integrate coverage into CI/CD | △ (possible but cumbersome) | ✅ |
| Want to check coverage changes per PR | ❌ | ✅ |
| Want to set thresholds (fail CI if below 80%) | △ (possible but cumbersome) | ✅ |
| Want to see coverage history | ❌ | ✅ |

Introducing SonarQube

SonarQube is a static analysis tool. Besides detecting code quality issues and security vulnerabilities, it can display test coverage by importing the reports your test runner produces (such as coverage/lcov.info).

- Choose SonarCloud (cloud version) or SonarQube (on-premises version)

- Install sonar-scanner

npm install sonar-scanner

- Create the SonarQube configuration file (sonar-project.properties)

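# SonarCloud settings. For a self-hosted SonarQube server, set sonar.host.url to your server and omit sonar.organization.
sonar.projectKey=your_project_key
sonar.organization=your_organization
sonar.host.url=https://sonarcloud.io
# Do not commit the token here; pass it through the SONAR_TOKEN environment variable instead.
sonar.sources=src
sonar.exclusions=**/*.spec.js, **/*.test.js, **/*.spec.ts, **/*.test.ts
sonar.tests=tests
sonar.test.inclusions=**/*.spec.js, **/*.test.js, **/*.spec.ts, **/*.test.ts
sonar.javascript.lcov.reportPaths=coverage/lcov.info

- Run sonar-scanner in GitHub Actions

- name: Run SonarQube Scan
  run: npx sonar-scanner
  env:
    SONAR_TOKEN: ${{ secrets.SONAR_TOKEN }}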

- Check SonarQube reports

- Code quality score

- Bugs and security vulnerabilities

- Code duplication rate

Tests Considering Feature Flags

To test new features and existing features while they coexist, you need a strategy that takes feature flags into account.

In small projects, you can manage feature flags using environment variables.

Example: .env

FEATURE_NEW_DASHBOARD=true

Example: config.ts

export const featureFlags = {
  newDashboard: process.env.FEATURE_NEW_DASHBOARD === "true",
};

Preparing Tests for Each Flag State

It is important to prepare test cases according to whether feature flags are ON or OFF.

- Managing feature flags

First, implement a function to manage the state of feature flags.

Example: featureFlags.ts

export const featureFlags = {
  newAlgorithm: process.env.FEATURE_NEW_ALGORITHM === "true",
};

export function isFeatureEnabled(flag: keyof typeof featureFlags): boolean {
  return featureFlags[flag];
}

- Define feature flags in featureFlags (managed via environment variables)

- Use isFeatureEnabled(flag) to determine whether a flag is ON or OFF; typing flag as keyof typeof featureFlags means only defined flag names compile

- Function that uses feature flags

Next, implement a function that changes behavior based on feature flags.

Example: calculateDiscount.ts

import { isFeatureEnabled } from "./featureFlags";

export function calculateDiscount(price: number): number {
  if (isFeatureEnabled("newAlgorithm")) {
    // Apply new algorithm
    return price * 0.9; // 10% discount
  } else {
    // Apply old algorithm
    return price * 0.95; // 5% discount
  }
}

- Use isFeatureEnabled("newAlgorithm") to check the flag

- 10% discount if the flag is ON

- 5% discount if the flag is OFF

- Test the implemented calculateDiscount() function by switching the feature flag ON/OFF.

import { calculateDiscount } from "../calculateDiscount";
import * as featureFlags from "../featureFlags"; // Import the module

describe("calculateDiscount", () => {
  afterEach(() => {
    jest.restoreAllMocks(); // Reset mocks
  });

  test("When the feature flag is ON, the new algorithm (10% discount) is applied", () => {
    jest.spyOn(featureFlags, "isFeatureEnabled").mockReturnValue(true);
    expect(calculateDiscount(1000)).toBe(900); // 10% off 1000 yen → 900 yen
  });

  test("When the feature flag is OFF, the old algorithm (5% discount) is applied", () => {
    jest.spyOn(featureFlags, "isFeatureEnabled").mockReturnValue(false);
    expect(calculateDiscount(1000)).toBe(950); // 5% off 1000 yen → 950 yen
  });
});

Key points

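- Use jest.spyOn(featureFlags, "isFeatureEnabled") to mock the flag ON/OFF

- Flag ON: 10% discount

- Flag OFF: 5% discount

- Reset mocks in afterEach to avoid affecting other tests

- The spy takes effect because Jest (via ts-jest or babel-jest) typically compiles modules to CommonJS, so calculateDiscount looks up isFeatureEnabled on the module object at call time; with native ES modules, exports are read-only and this approach generally does not work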
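Because featureFlags reads process.env once, when the module is first imported, setting process.env.FEATURE_NEW_ALGORITHM inside a test has no effect, which is one reason mocking isFeatureEnabled is convenient. An alternative is to make the flag source a parameter so both states can be tested without mocks. This is a sketch under that assumption; createFeatureFlags and calculateDiscountWith are hypothetical names, not part of the code above.

```typescript
type FlagName = "newAlgorithm";

// Hypothetical mapping from flag names to environment variable names
const FLAG_ENV_VARS: Record<FlagName, string> = {
  newAlgorithm: "FEATURE_NEW_ALGORITHM",
};

export interface FeatureFlags {
  isEnabled(flag: FlagName): boolean;
}

// Reads the given environment object on every call instead of once at import time
export function createFeatureFlags(env: Record<string, string | undefined>): FeatureFlags {
  return {
    isEnabled: (flag) => env[FLAG_ENV_VARS[flag]] === "true",
  };
}

// Same discount rules as calculateDiscount, with the flags passed in explicitly
export function calculateDiscountWith(flags: FeatureFlags, price: number): number {
  return flags.isEnabled("newAlgorithm") ? price * 0.9 : price * 0.95;
}

// Both flag states can be exercised without jest.spyOn
const on = createFeatureFlags({ FEATURE_NEW_ALGORITHM: "true" });
const off = createFeatureFlags({});
console.log(calculateDiscountWith(on, 1000)); // 900
console.log(calculateDiscountWith(off, 1000)); // 950

// Production code could pass the real environment: createFeatureFlags(process.env)
```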

Conclusion

As projects grow in size, test automation becomes an indispensable element. However, it is not enough to simply increase the number of tests; it is important to introduce them with an appropriate strategy and then operate and optimize them.

This article introduced practical test strategies from unit tests to E2E tests, API tests, mock data management, and test optimization in CI/CD. By applying these methods, you can improve quality without slowing down development speed.

Tests are not “write once and done,” but something to be “operated while continuously improving.” Use appropriate tools and methods to build a test strategy that fits your project.
