Practical Microservices Strategy: The Tech Stack Behind BFF, API Management, and Authentication Platform (AWS, Keycloak, gRPC, Kafka)

Introduction

In recent years, many companies have adopted microservices architecture to improve system scalability and development efficiency. However, it is not enough to simply split services; you must also address many challenges such as proper API design, choosing communication methods, unifying authentication and authorization, and optimizing asynchronous processing.

In this article, we introduce techniques you can apply in real-world system design: adopting a BFF (Backend for Frontend), managing endpoints with an API Gateway, inter-service communication with gRPC, asynchronous messaging with Kafka and RabbitMQ, building an authentication platform with Keycloak, and standardizing API documentation with Swagger.

We will explain the challenges you face when introducing and operating microservices and their solutions, along with concrete examples. We hope this will be useful for those who are about to move to microservices or engineers aiming for more sophisticated designs.

Formulating a Microservices Strategy

By introducing microservices architecture, you can expect improved scalability and development speed. However, if you introduce it without a proper strategy, operational and management complexity will increase and may even reduce development efficiency.

Overview of Microservices Architecture

Microservices Architecture (MSA) is an architectural style that splits a single large system (monolith) into multiple independent services. Each service can be deployed independently and can adopt a different technology stack.

- Advantages

- Improved development speed

- Since each service is independent, multiple teams can develop in parallel.

- Scalability

- You can scale up/down only the services that need it.

- Technological diversity

- You can choose the optimal technology for each service (e.g., some parts in Node.js, others in Go).

- Disadvantages

- Increased operational complexity

- You need to design inter-service communication (API Gateway, gRPC, etc.).

- Distributed data management

- You need a strategy for how each service shares data.

Clarifying the Purpose of Introducing Microservices

Clarifying the purpose of introduction makes it easier to design appropriately.

Improving Development Speed

- Independent deployments are possible (automated with CI/CD)

- Different teams can develop in parallel

- Example: Split REST APIs into independent services

For example, by separating “Order Management” and “User Management” in an e-commerce site, different development teams can release independently.

// orders-service (Order Management API), built and deployed on its own
import express from "express";

const app = express();

// Return the list of orders (hard-coded sample data)
app.get("/orders", (req, res) => {
  res.json([{ id: 1, item: "Laptop", status: "Shipped" }]);
});

app.listen(3001, () => console.log("Orders Service running on port 3001"));

// users-service (User Management API), a separate codebase and process
import express from "express";

const app = express();

// Return the list of users (hard-coded sample data)
app.get("/users", (req, res) => {
  res.json([{ id: 1, name: "John Doe" }]);
});

app.listen(3002, () => console.log("Users Service running on port 3002"));

Scalability

- You can scale high-load services individually (horizontal scaling).

- Example: If the order processing API has heavy traffic, scale only the order service.

Example with Kubernetes

Deployment

apiVersion: apps/v1
kind: Deployment
metadata:
  name: orders-service
spec:
  replicas: 2 # Initial value, comparable to DesiredCapacity in an AWS Auto Scaling group (the HPA overwrites it)
  selector:
    matchLabels:
      app: orders
  template:
    metadata:
      labels:
        app: orders
    spec:
      containers:
        - name: orders
          image: ghcr.io/example/orders:1.2.3
          ports:
            - containerPort: 8080
          resources:
            requests:
              cpu: "200m"
              memory: "256Mi"
            limits:
              cpu: "1"
              memory: "512Mi"
          readinessProbe:
            httpGet:
              path: /healthz
              port: 8080
          livenessProbe:
            httpGet:
              path: /livez
              port: 8080

Service

apiVersion: v1
kind: Service
metadata:
  name: orders-service
spec:
  selector:
    app: orders
  ports:
    - port: 80
      targetPort: 8080
  type: ClusterIP

HPA

apiVersion: autoscaling/v2
kind: HorizontalPodAutoscaler
metadata:
  name: orders-hpa
spec:
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: orders-service
  minReplicas: 1 # MinSize
  maxReplicas: 5 # MaxSize
  metrics:
    - type: Resource
      resource:
        name: cpu
        target:
          type: Utilization
          averageUtilization: 70 # Example: target average CPU 70%

For readers coming from AWS Auto Scaling groups, the settings map roughly as follows:

- DesiredCapacity → Deployment.spec.replicas (initial value). Actual auto scaling is handled by the HPA.

- MinSize/MaxSize → HPA.spec.minReplicas / maxReplicas.

- LaunchTemplate → Container image + resources settings (Pod spec). Node startup definitions are the responsibility of the cluster/node pool in k8s.
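To make the 70% CPU target more concrete, the following sketch applies the scaling formula described in the Kubernetes documentation, desiredReplicas = ceil(currentReplicas × currentMetric / targetMetric), and clamps the result to minReplicas and maxReplicas. The names `HpaSpec` and `desiredReplicas` are illustrative, and the real controller also applies a tolerance band and stabilization windows that are left out here.

```typescript
// Simplified model of the HorizontalPodAutoscaler calculation.
// Assumption: the tolerance and stabilization behaviour of the real controller is ignored.
interface HpaSpec {
  minReplicas: number;
  maxReplicas: number;
  targetUtilization: number; // e.g. 70 means 70% average CPU
}

function desiredReplicas(
  currentReplicas: number,
  currentUtilization: number,
  spec: HpaSpec
): number {
  // Core formula: scale in proportion to how far the metric is from its target.
  const raw = Math.ceil(currentReplicas * (currentUtilization / spec.targetUtilization));
  // Clamp to the configured bounds.
  return Math.min(spec.maxReplicas, Math.max(spec.minReplicas, raw));
}

// Values taken from the orders-hpa manifest above.
const ordersHpa: HpaSpec = { minReplicas: 1, maxReplicas: 5, targetUtilization: 70 };

console.log(desiredReplicas(2, 140, ordersHpa)); // 4: load is twice the target
console.log(desiredReplicas(2, 35, ordersHpa));  // 1: load is half the target
console.log(desiredReplicas(4, 140, ordersHpa)); // 5: capped by maxReplicas
```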

Technological Diversity

- Frontend with Next.js (React), backend with NestJS (TypeScript)

- Some services can be implemented in Go or Python

- Example: ML processing in Python, API in Go

from flask import Flask, jsonify

app = Flask(__name__)


@app.route("/predict", methods=["GET"])
def predict():
    # Placeholder response; a real service would run model inference here
    return jsonify({"prediction": "Positive"})


if __name__ == "__main__":
    app.run(port=5000)

Migration Strategy from a Monolith

Introducing microservices all at once makes operations difficult, so a phased migration is important.

Applying the Strangler Pattern

- Split parts of the existing monolith into microservices.

- Gradually migrate monolith functions to new microservices.

Migration image

- Add a proxy in front of the monolith API.

- The new microservice takes over processing.

- Gradually shrink the monolith.
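The proxy step can be pictured as a small routing table: paths that have already been migrated go to the new service, and everything else still reaches the monolith. The sketch below is one possible shape, not a prescribed implementation; the URLs, `migratedRoutes`, and `resolveUpstream` are hypothetical names, and paths are assumed to arrive without a query string.

```typescript
// Strangler-style routing table: prefixes listed here have already moved to a new service.
// All names and URLs are hypothetical placeholders.
interface Route {
  prefix: string;   // path prefix that has been migrated
  upstream: string; // base URL of the new microservice
}

const MONOLITH_URL = "http://monolith.internal";

const migratedRoutes: Route[] = [
  { prefix: "/orders", upstream: "http://orders-service.internal" },
  { prefix: "/users", upstream: "http://users-service.internal" },
];

// True when `path` equals the prefix or continues it at a "/" boundary,
// so "/orders/1" matches "/orders" but "/orders-archive" does not.
function matchesPrefix(path: string, prefix: string): boolean {
  return path === prefix || path.startsWith(prefix + "/");
}

function resolveUpstream(path: string, routes: Route[] = migratedRoutes): string {
  const route = routes.find((r) => matchesPrefix(path, r.prefix));
  // Anything not migrated yet still goes to the monolith.
  return route ? route.upstream : MONOLITH_URL;
}

console.log(resolveUpstream("/orders/42"));  // handled by the new orders service
console.log(resolveUpstream("/payments/7")); // still handled by the monolith
```

Shrinking the monolith then amounts to adding entries to the table one feature at a time, which keeps each migration step small and reversible.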

Data Management Strategy

- Move from a single monolith DB → to a DB per microservice.

- Each service has its own independent data store (Database per service).

Defining the Migration Period

| Phase | Work content |

|---|---|

| 1. Investigation | Analyze the existing system |

| 2. Split design | Plan service decomposition |

| 3. Implementation | API development, data splitting |

| 4. Verification | Load testing, monitoring setup |

| 5. Migration complete | Apply to production environment |

BFF Introduction Plan

BFF (Backend for Frontend) is an architectural pattern where you prepare a backend optimized for each frontend. You can design dedicated APIs for different clients such as mobile apps, web apps, and admin consoles, which increases development flexibility and optimizes API responses.

Benefits of Introducing BFF

By introducing BFF, you gain the following advantages:

- API design optimized for each client

- You can design APIs tailored to frontend requirements, omitting unnecessary data and providing the necessary data appropriately.

- For example, you can minimize data size for mobile app APIs and provide richer data for web app APIs, enabling optimization for each use case.

- Improved independence between frontend and backend

- Changes on the frontend side do not directly force changes on the backend, increasing development flexibility.

- Since the BFF acts as an intermediary between the client and backend, it can absorb the impact of backend API changes.

- Reduced backend load

- The BFF can aggregate multiple API requests and reduce the number of requests to the backend.

- By performing data transformation and caching at the BFF, you can reduce the load on the backend.
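As a rough illustration of aggregation and client-specific shaping, the sketch below merges a user record and an order list into one compact response for a mobile client. The types, field names, and stub fetchers are assumptions made for the example; a real BFF would call the backend services over HTTP or gRPC.

```typescript
// Shapes returned by the (hypothetical) backend services.
interface User { id: number; name: string; email: string; address: string }
interface Order { id: number; item: string; status: string; internalNotes: string }

// Compact response tailored to a mobile client.
interface MobileProfile {
  name: string;
  recentOrders: { item: string; status: string }[];
}

// Pure shaping step: drop fields the mobile screen does not show and cap the list length.
function toMobileProfile(user: User, orders: Order[], maxOrders = 3): MobileProfile {
  return {
    name: user.name,
    recentOrders: orders.slice(0, maxOrders).map(({ item, status }) => ({ item, status })),
  };
}

// Backend calls are injected so the sketch runs without a network.
type FetchUser = (userId: number) => Promise<User>;
type FetchOrders = (userId: number) => Promise<Order[]>;

async function getMobileProfile(
  userId: number,
  fetchUser: FetchUser,
  fetchOrders: FetchOrders
): Promise<MobileProfile> {
  // One client request fans out to two backend calls that run in parallel.
  const [user, orders] = await Promise.all([fetchUser(userId), fetchOrders(userId)]);
  return toMobileProfile(user, orders);
}

// Sample data and stubs standing in for real services.
const sampleUser: User = { id: 1, name: "John Doe", email: "john@example.com", address: "1 Main St" };
const sampleOrders: Order[] = [
  { id: 1, item: "Laptop", status: "Shipped", internalNotes: "fragile" },
  { id: 2, item: "Phone", status: "Processing", internalNotes: "" },
];

getMobileProfile(1, async () => sampleUser, async () => sampleOrders).then((profile) =>
  console.log(JSON.stringify(profile))
);
```

A web BFF could reuse the same fetchers with a different shaping function that keeps more fields.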

Key Points for Implementing BFF

- Consider adopting GraphQL or REST API

- In the case of GraphQL

- Clients can flexibly specify the data they retrieve, preventing over-fetching (retrieving unnecessary data) and under-fetching (insufficient data).

- Suitable when the BFF integrates multiple backend APIs.

- In the case of REST API

- If you design endpoints appropriately for each client, you can manage APIs simply.

- Easy to integrate with existing REST APIs and has a lower introduction cost than GraphQL.

- Integration with API Gateway

- By combining BFF with an API Gateway, you can centrally manage security and authentication and reduce operational burden.

- If you control BFF request routing at the API Gateway, you can easily separate BFFs for different frontends.

- Introducing a caching strategy

- Cache frequently accessed data on the BFF side to reduce requests to the backend.

- Use Redis or in-memory cache to improve response speed.
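The caching point can be sketched with a small in-memory cache that expires entries after a fixed time to live (TTL). This is a minimal example under the assumption of a single BFF instance; with several instances a shared store such as Redis would usually take its place. `TtlCache` and `getOrLoad` are illustrative names.

```typescript
// Minimal in-memory TTL cache for a BFF. The clock is injectable so expiry can be tested.
class TtlCache<V> {
  private entries = new Map<string, { value: V; expiresAt: number }>();
  private readonly ttlMs: number;
  private readonly now: () => number;

  constructor(ttlMs: number, now: () => number = () => Date.now()) {
    this.ttlMs = ttlMs;
    this.now = now;
  }

  get(key: string): V | undefined {
    const entry = this.entries.get(key);
    if (!entry) return undefined;
    if (entry.expiresAt <= this.now()) {
      this.entries.delete(key); // expired: evict and report a miss
      return undefined;
    }
    return entry.value;
  }

  set(key: string, value: V): void {
    this.entries.set(key, { value, expiresAt: this.now() + this.ttlMs });
  }
}

// Cache-aside helper: return the cached value, or load it from the backend and store it.
function getOrLoad<V>(cache: TtlCache<V>, key: string, load: () => V): V {
  const cached = cache.get(key);
  if (cached !== undefined) return cached;
  const fresh = load();
  cache.set(key, fresh);
  return fresh;
}

let fakeTime = 0;
let backendCalls = 0;
const productCache = new TtlCache<string>(1000, () => fakeTime);
const loadProduct = () => { backendCalls++; return "Laptop"; };

getOrLoad(productCache, "product:1", loadProduct); // miss: calls the backend
getOrLoad(productCache, "product:1", loadProduct); // hit: served from the cache
fakeTime = 1500;                                   // the 1000 ms TTL has passed
getOrLoad(productCache, "product:1", loadProduct); // miss again
console.log(backendCalls); // three lookups resulted in two backend calls
```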

Points to Note When Introducing BFF

- Increased maintenance burden

- If you design a BFF for each frontend, the number of APIs may grow too large and become difficult to manage.

- You need to unify API design and share what can be shared.

- Consideration of deployment and scaling

- Since you need to deploy different BFFs for each frontend, proper CI/CD management is important.

- As a scaling strategy, consider a mechanism that allows you to scale up high-traffic BFFs individually.

Optimizing Endpoint Management Using an API Gateway

In a microservices architecture, many services provide APIs, so it is important to use an API Gateway to optimize endpoint management.

Role of the API Gateway

In a microservices architecture, the API Gateway sits between the client and multiple microservices and centrally manages endpoints. This simplifies the management of complex APIs and improves security and performance.

- Request routing

- Forwards client requests to the appropriate microservice.

- Supports path-based routing (e.g., /users to the User service) and host-based routing (e.g., choosing the backend from the request's hostname, such as api.example.com).

- Centralized authentication and authorization

- Performs authentication at the API Gateway and blocks invalid requests.

- Can apply OAuth2, JWT (JSON Web Token), API key authentication, IP restrictions, etc.

- Reduces the burden of implementing authentication in each microservice.

- Response aggregation (federation)

- Aggregates responses from multiple microservices and returns them as a single response.

- For example, a /user-info request can retrieve both user information and order history at once.

- Combined with API approaches like GraphQL, it reduces the number of requests from the frontend.

- Load balancing

- Distributes load to backend services and improves performance.

- Controls the number of client connections and provides an environment that is easy to auto-scale.

- Enhanced security

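- Can set rate limiting to reduce the impact of abusive traffic and overload (large-scale DDoS attacks still need dedicated protection in front of the gateway).

- Manages CORS (Cross-Origin Resource Sharing) settings to control which web origins may call the API from a browser.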

Key Points for Implementing an API Gateway

When introducing an API Gateway, selecting the right tool and configuring it properly are important.

Selecting an API Gateway

- AWS API Gateway:

- Fully managed and ideal for serverless architectures (Lambda integration).

- Can integrate authentication (Cognito, IAM), caching, rate limiting, etc.

- NGINX:

- Works as a lightweight, high-speed reverse proxy and can serve as an API Gateway.

- With OpenResty, you can achieve even more advanced control.

Leveraging Caching Features

- Cache frequently requested data to shorten response time.

- Use the caching features of the API Gateway (AWS API Gateway cache, Kong Proxy Cache).

- Combining with a CDN (CloudFront, Fastly) further improves performance.

Setting Rate Limits

- Limit the number of requests per second to prevent DDoS attacks and overload.

- Example: Use the rate-limiting plugin in Kong, or the “throttling” feature in AWS API Gateway.
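Rate limiting is usually configured rather than coded, but the underlying idea is easy to see in a few lines. The sketch below uses a token bucket, one common algorithm for this purpose; it is not a description of how Kong or AWS API Gateway are implemented internally. `TokenBucket` and its parameters are illustrative, and time is passed in explicitly so the behaviour is deterministic.

```typescript
// Token-bucket rate limiter sketch: each request consumes one token,
// and tokens are refilled at a steady rate up to a maximum burst size.
class TokenBucket {
  private tokens: number;
  private lastRefillMs: number;
  private readonly capacity: number;
  private readonly refillPerSecond: number;

  constructor(capacity: number, refillPerSecond: number, nowMs: number) {
    this.capacity = capacity;
    this.refillPerSecond = refillPerSecond;
    this.tokens = capacity; // start full so an initial burst is allowed
    this.lastRefillMs = nowMs;
  }

  // Returns true if the request is allowed, false if it should be rejected (e.g. HTTP 429).
  tryConsume(nowMs: number): boolean {
    const elapsedSeconds = (nowMs - this.lastRefillMs) / 1000;
    this.tokens = Math.min(this.capacity, this.tokens + elapsedSeconds * this.refillPerSecond);
    this.lastRefillMs = nowMs;
    if (this.tokens >= 1) {
      this.tokens -= 1;
      return true;
    }
    return false;
  }
}

// One bucket per client: a burst of 2 requests, refilled at 1 request per second.
const bucket = new TokenBucket(2, 1, 0);
const results = [
  bucket.tryConsume(0),    // allowed (burst)
  bucket.tryConsume(0),    // allowed (burst)
  bucket.tryConsume(0),    // rejected: the bucket is empty
  bucket.tryConsume(1000), // allowed: one token was refilled after 1 s
];
console.log(results); // [ true, true, false, true ]
```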

Introducing Logging and Monitoring

- Log requests passing through the API Gateway to detect anomalies.

- Use AWS CloudWatch, Datadog, Prometheus, etc.

- Measure latency per request to identify bottlenecks.

Defining Service Decomposition and Its Sequence

In a microservices architecture, proper service decomposition is extremely important. If services are split along the wrong boundaries, development becomes more complex and the system becomes harder to scale and operate.

Criteria for Service Decomposition

The main criteria to consider when decomposing services include the following:

- Decomposition by business domain (Domain-Driven Design, DDD)

- Use the concepts of Domain-Driven Design (DDD) and basically decompose services by business use case.

- Each service has a “Bounded Context” and manages its own business rules.

- Example:

- For an e-commerce site:

- Order Management Service

- Inventory Management Service

- Payment Service

- User Management Service

- Clarifying data ownership (one service, one data)

- Make one service own specific data to make it easier to manage data consistency.

- Avoid structures where “Service A writes data that Service B can directly update.”

- When data is shared, use APIs or event notifications.

- Example:

- The User Management Service manages user information (email, address, etc.).

- The Order Management Service manages order information and queries the User Management Service when needed.

- Independence of development teams

- Create a state where each service can be developed and deployed independently to improve development productivity.

- Each service can have its own repository and adopt a different technology stack.

- Example:

- Order Management Service: Java + Spring Boot

- Payment Service: Node.js + Express

- Analytics Service: Python + FastAPI

Data Consistency Between Services

When you decompose services, data becomes distributed, so how to maintain data consistency becomes important.

- Event-driven architecture (event notification)

- A method where “when a data change occurs, that event is notified to other services.” Event Sourcing, which stores state as a sequence of events, is a related but separate pattern.

- Often uses message brokers such as Kafka, RabbitMQ, or Amazon SNS/SQS.

- Example:

- The Order Management Service publishes an “Order Completed” event.

- The Inventory Management Service receives the event and decreases inventory.

- The Payment Service processes the order and completes payment.

- Data replication and synchronization strategy

- A strategy where you do not share databases but copy part of the data between services.

- Consider eventual consistency and decide whether to update data in real time or via batch processing.

- Using cache or CQRS (Command Query Responsibility Segregation) is also effective.

- Example:

- When there is a change in the User Management Service, synchronize part of the data to the Order Management Service.

- Store temporary cache in Redis or Elasticsearch to reduce latency.
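The event-driven example earlier in this section (an “Order Completed” event consumed by the inventory and payment services) can be sketched with a tiny in-memory bus standing in for the message broker. Everything here is illustrative: the `EventBus` class, the event fields, and the inline delivery are assumptions made to keep the example runnable without Kafka or RabbitMQ.

```typescript
// Tiny in-memory publish/subscribe bus standing in for Kafka, RabbitMQ, or SNS/SQS.
interface OrderCompleted {
  type: "OrderCompleted";
  orderId: string;
  sku: string;
  quantity: number;
}

type Handler = (event: OrderCompleted) => void;

class EventBus {
  private handlers: Handler[] = [];

  subscribe(handler: Handler): void {
    this.handlers.push(handler);
  }

  publish(event: OrderCompleted): void {
    // A real broker delivers asynchronously; this sketch calls handlers inline.
    for (const handler of this.handlers) handler(event);
  }
}

const bus = new EventBus();

// Inventory service: owns stock levels and reacts to order events.
const stock = new Map<string, number>([["laptop", 10]]);
bus.subscribe((event) => {
  const current = stock.get(event.sku) ?? 0;
  stock.set(event.sku, current - event.quantity);
});

// Payment service: records which orders it has processed.
const paidOrders: string[] = [];
bus.subscribe((event) => {
  paidOrders.push(event.orderId);
});

// Order service: publishes the event without knowing who consumes it.
bus.publish({ type: "OrderCompleted", orderId: "order-1", sku: "laptop", quantity: 2 });

console.log(stock.get("laptop"), paidOrders); // 8 [ 'order-1' ]
```

The order service never touches the inventory data directly, which is the data-ownership rule described under the decomposition criteria.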

Evaluating Communication Methods Between Services

In a microservices architecture, choosing the right communication method between services is extremely important. By choosing the right communication method, you can improve system performance, scalability, and fault tolerance.

Selecting Communication Methods

Inter-service communication methods can be broadly classified into the following two types:

Synchronous Communication

A method where the client sends a request and waits until it receives a response.

- REST (HTTP API)

- Simple and widely adopted

- Exchanges data in JSON or XML format

- Uses HTTP methods (GET, POST, PUT, DELETE, etc.)

Express + Axios (REST API)

// user-service: fetches a user's orders from order-service over HTTP
import express, { Request, Response } from "express";
import axios from "axios";

const app = express();
const PORT = 3000;

app.get("/users/:id", async (req: Request, res: Response) => {
  const { id } = req.params;
  try {
    // HTTP request to another microservice (order-service)
    const orderResponse = await axios.get(`http://localhost:4000/orders/${id}`);
    res.json({ userId: id, orders: orderResponse.data });
  } catch (error) {
    res.status(500).json({ error: "Failed to fetch orders" });
  }
});

app.listen(PORT, () => console.log(`User Service running on port ${PORT}`));

// order-service: a separate process that returns the orders for a user
import express, { Request, Response } from "express";

const app = express();
const PORT = 4000;

app.get("/orders/:userId", (req: Request, res: Response) => {
  // Sample data; a real service would look up orders by req.params.userId
  const orders = [{ id: 1, item: "Laptop" }, { id: 2, item: "Phone" }];
  res.json(orders);
});

app.listen(PORT, () => console.log(`Order Service running on port ${PORT}`));

- Access from user-service to order-service

gRPC

- Advantages: Enables high-speed communication (binary format).

- Disadvantages: Configuration is a bit more complex.

syntax = "proto3";

package orderService;

service OrderService {
  rpc GetOrder (OrderRequest) returns (OrderResponse);
}

message OrderRequest {
  string id = 1;
}

message OrderResponse {
  string id = 1;
  string item = 2;
}

gRPC server

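// @grpc/grpc-js and @grpc/proto-loader expose named exports, so import the namespace
import * as grpc from "@grpc/grpc-js";
import * as protoLoader from "@grpc/proto-loader";

const PROTO_PATH = "order.proto";
const packageDefinition = protoLoader.loadSync(PROTO_PATH);
const orderProto = grpc.loadPackageDefinition(packageDefinition) as any;

const orders = [{ id: "1", item: "Laptop" }, { id: "2", item: "Phone" }];

const server = new grpc.Server();

server.addService(orderProto.orderService.OrderService.service, {
  GetOrder: (call: any, callback: any) => {
    const order = orders.find(o => o.id === call.request.id);
    if (!order) {
      // Report a missing order with a gRPC status code instead of a placeholder record
      callback({ code: grpc.status.NOT_FOUND, message: "Order not found" });
      return;
    }
    callback(null, order);
  },
});

// Insecure credentials are for local experiments only; use TLS in production
server.bindAsync("0.0.0.0:50051", grpc.ServerCredentials.createInsecure(), (err, port) => {
  if (err) {
    console.error("Failed to bind gRPC server", err);
    return;
  }
  // Older @grpc/grpc-js releases also require calling server.start() here
  console.log(`gRPC Server running on port ${port}`);
});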

gRPC client

import * as grpc from "@grpc/grpc-js";
import * as protoLoader from "@grpc/proto-loader";

const PROTO_PATH = "order.proto";
const packageDefinition = protoLoader.loadSync(PROTO_PATH);
const orderProto = grpc.loadPackageDefinition(packageDefinition) as any;

const client = new orderProto.orderService.OrderService(
  "localhost:50051",
  grpc.credentials.createInsecure()
);

// Unary call: the callback receives either an error or the OrderResponse
client.GetOrder({ id: "1" }, (err: any, response: any) => {
  if (err) console.error(err);
  else console.log(response);
});

Asynchronous Communication

A method where a service can continue processing after sending a request without waiting for a response.

RabbitMQ and Kafka both function as message brokers and enable asynchronous message processing, but their design philosophies and use cases differ.

RabbitMQ (Message Queue)

Characteristics

- Lightweight message queue system

- A producer (sender) sends messages and a consumer (receiver) receives them

- Uses AMQP (Advanced Message Queuing Protocol)

- Supports message routing and priority management

- Flexible queue control for enterprise use

Use cases

- Request-response type processing

- When messages must be processed reliably

- Workflows that require transaction management

- Sending small volumes of messages with low latency

Sample RabbitMQ code (TypeScript)

Producer

import amqplib from "amqplib";

async function sendToQueue() {
  const connection = await amqplib.connect("amqp://localhost");
  const channel = await connection.createChannel();
  const queue = "orderQueue";

  await channel.assertQueue(queue, { durable: true });

  // persistent: true marks the message for storage on disk, matching the durable queue
  channel.sendToQueue(queue, Buffer.from(JSON.stringify({ orderId: 123 })), { persistent: true });
  console.log("Sent order 123 to queue");

  // Close the channel, then the connection, instead of relying on a timer
  await channel.close();
  await connection.close();
}

sendToQueue().catch(console.error);

Consumer

import amqplib from "amqplib";

async function receiveFromQueue() {
  const connection = await amqplib.connect("amqp://localhost");
  const channel = await connection.createChannel();
  const queue = "orderQueue";

  await channel.assertQueue(queue, { durable: true });

  channel.consume(queue, (msg) => {
    if (msg) {
      const order = JSON.parse(msg.content.toString());
      console.log("Received order:", order);
      // Acknowledge only after processing so unprocessed messages are redelivered
      channel.ack(msg);
    }
  });
}

receiveFromQueue().catch(console.error);

Kafka

Characteristics

- Distributed message broker for large-scale data stream processing

- Ideal for Event-Driven Architecture (EDA)

- Log-based message storage

- Uses a Pub/Sub model

- Ultra-high throughput

Use cases

- Event-Driven Architecture (EDA)

- Stream data processing (log analysis, real-time processing)

- Asynchronous communication between microservices

- Data sharing in distributed systems

Sample Kafka code (TypeScript)

Producer

import { Kafka } from "kafkajs";

const kafka = new Kafka({
  clientId: "order-service",
  brokers: ["localhost:9092"],
});

async function produceMessage() {
  const producer = kafka.producer();
  await producer.connect();

  await producer.send({
    topic: "order-events",
    messages: [{ value: JSON.stringify({ orderId: "123", status: "Created" }) }],
  });

  await producer.disconnect();
}

produceMessage().catch(console.error);

Consumer

import { Kafka } from "kafkajs";

const kafka = new Kafka({
  clientId: "order-service",
  brokers: ["localhost:9092"],
});

async function consumeMessage() {
  const consumer = kafka.consumer({ groupId: "order-group" });
  await consumer.connect();
  await consumer.subscribe({ topic: "order-events", fromBeginning: true });

  await consumer.run({
    eachMessage: async ({ message }) => {
      // message.value can be null, so guard before decoding
      console.log("Received:", message.value?.toString());
    },
  });
}

consumeMessage().catch(console.error);
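The difference in design philosophy between a queue and Kafka's log-based storage is easier to see in a toy model. In the sketch below, records stay in the log after being read and each consumer group keeps its own offset, so independent groups can read the same events and a group can replay history. `TopicLog` is a teaching model invented for this example, not part of any Kafka client library.

```typescript
// Toy append-only log with per-group offsets, illustrating log-based message storage.
class TopicLog {
  private records: string[] = [];
  private offsets = new Map<string, number>(); // consumer group -> next offset to read

  append(record: string): number {
    this.records.push(record);
    return this.records.length - 1; // offset of the new record
  }

  // Returns unread records for the group and advances its offset. Records are not deleted.
  poll(groupId: string): string[] {
    const from = this.offsets.get(groupId) ?? 0;
    const batch = this.records.slice(from);
    this.offsets.set(groupId, this.records.length);
    return batch;
  }

  // Rewind a group so that it re-reads the log from the start.
  seekToBeginning(groupId: string): void {
    this.offsets.set(groupId, 0);
  }
}

const orderEvents = new TopicLog();
orderEvents.append("order-1 Created");
orderEvents.append("order-1 Paid");

const billingFirst = orderEvents.poll("billing");     // both records
const analyticsFirst = orderEvents.poll("analytics"); // both records again: groups are independent
const billingSecond = orderEvents.poll("billing");    // nothing new
orderEvents.seekToBeginning("billing");
const billingReplay = orderEvents.poll("billing");    // replays both records

console.log(billingFirst.length, analyticsFirst.length, billingSecond.length, billingReplay.length); // 2 2 0 2
```

In a queue such as the RabbitMQ example, an acknowledged message is removed, so this kind of replay and fan-out to independent readers needs additional queues or exchanges.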

Integrating Complex Authentication and Authorization

Why Unified Authentication and Authorization Are Necessary

In a microservices architecture, if each service manages authentication and authorization independently, the following problems arise:

- Distributed management of authentication information

- If each service has its own authentication mechanism, it becomes difficult to maintain consistency of user information.

- Example: A password is changed in one service but not reflected in another.

- Duplication and complexity of authorization rules

- If each service implements its own authorization rules, the same rules must be managed across multiple services, making consistency difficult.

- Example: You change a rule “only admins can edit” in one service, but another service still uses the old rule.

- Security risks

- If authentication and authorization implementations are not unified, services with vulnerabilities are more likely to be targeted.

- Example: A particular microservice still uses an old authentication method (such as Basic authentication) and becomes a target of attack.

To solve these issues, you need a mechanism to centrally manage authentication and authorization.

Adopting OAuth 2.0 + OpenID Connect

By combining OAuth 2.0 and OpenID Connect (OIDC), you can unify authentication and authorization safely and simply.

Role of OAuth 2.0

OAuth 2.0 is a protocol that allows third-party apps to receive delegated permission from users to access resources. This allows users to authorize access using tokens without giving their credentials (ID/password) directly to the app.

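In particular, the Authorization Code Flow is widely used in web and mobile apps because only a short-lived authorization code passes through the browser; the client then exchanges that code for tokens directly with the authorization server. Public clients such as mobile apps and single-page apps should combine it with PKCE.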

- Authorization Code Flow

- Commonly used in web and mobile apps.

- The client (app) delegates authentication to an Identity Provider (IdP) and obtains tokens.

- Advantage: The app does not need to handle user credentials (ID/password), which is safer.

Role of OpenID Connect (OIDC)

OpenID Connect (OIDC) is a specification that extends OAuth 2.0 with “user authentication.” With OIDC, you can obtain user identity information via an ID token, enabling SSO (Single Sign-On) and standardized profile information retrieval.

- Realizing Single Sign-On (SSO)

- Users can access all services after logging in once.

- Example: Logging into YouTube and Gmail with a Google account.

- Standardized user information retrieval

- OIDC includes user information (email address, name, etc.) in the ID token, allowing each service to handle user information in a unified way.

Examples of IdPs (Identity Providers) to Introduce

- Keycloak

- OSS and free to use.

- Highly customizable and suitable for building an authentication platform tailored to your services.

- Auth0

- Cloud-based authentication platform that is easy to introduce.

- Rich features such as social login and multi-factor authentication (MFA).

- Okta

- Authentication platform for large enterprises.

- Can meet high security requirements.

Centralized Authorization Management

By centralizing authorization, each service no longer needs to have its own authorization logic, ensuring consistency.

Representative authorization models include RBAC (Role-Based Access Control) and ABAC (Attribute-Based Access Control).

RBAC (Role-Based Access Control)

- A method of access control based on roles.

- Example

- “Admin” can edit all data.

- “General user” can only view data.

- Advantages

- Intuitive and easy to understand.

- Matches well with corporate organizational structures.

- Disadvantages

- Difficult to set fine-grained permissions (lacks flexibility).

- Hard to set custom permissions per user.

ABAC (Attribute-Based Access Control)

A method of access control based on attributes of users or resources (e.g., department, device type, access time).

Examples

- “Members of the marketing department cannot access sales data.”

- “Certain APIs can be accessed only between 9:00 a.m. and 6:00 p.m.”

Advantages

- Enables more fine-grained access control.

- Allows dynamic policy settings.

Disadvantages

- More complex to configure than RBAC.
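The two models can be compared side by side in code. The sketch below encodes the RBAC example (admins can edit, general users can only view) as a role-to-permission table and the ABAC examples (department and time of day) as a function over attributes. All type and function names are illustrative, and a real system would typically load such policies from the IdP or a policy engine rather than hard-code them.

```typescript
// RBAC: permissions are looked up from the user's roles.
type Role = "admin" | "general";
type Action = "view" | "edit";

const rolePermissions: Record<Role, Action[]> = {
  admin: ["view", "edit"],
  general: ["view"],
};

function rbacAllows(roles: Role[], action: Action): boolean {
  return roles.some((role) => rolePermissions[role].includes(action));
}

// ABAC: the decision is computed from attributes of the user, the resource, and the request context.
interface AccessRequest {
  department: string;       // user attribute
  resourceCategory: string; // resource attribute
  hour: number;             // context attribute: local hour, 0 to 23
}

function abacAllows(req: AccessRequest): boolean {
  // Rule 1: members of the marketing department cannot access sales data.
  if (req.department === "marketing" && req.resourceCategory === "sales") return false;
  // Rule 2: access is allowed only between 9:00 and 18:00.
  return req.hour >= 9 && req.hour < 18;
}

console.log(rbacAllows(["general"], "edit")); // false
console.log(rbacAllows(["admin"], "edit"));   // true
console.log(abacAllows({ department: "marketing", resourceCategory: "sales", hour: 10 })); // false
console.log(abacAllows({ department: "sales", resourceCategory: "sales", hour: 20 }));     // false
console.log(abacAllows({ department: "sales", resourceCategory: "sales", hour: 10 }));     // true
```

The RBAC table stays small and readable, while the ABAC function can express conditions that no fixed role list captures, at the cost of more logic to maintain.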

Using JWT (JSON Web Token)

JWT is a token format that can safely exchange authentication and authorization information with a signature.

- Role

- Can hold “role” and “attribute” information used in RBAC/ABAC as claims.

- Each microservice can verify this information and perform appropriate access control.

- Advantages

- Tamper-evident (signed)

- Stateless authentication (no session management required)

- Enables consistent information sharing between services

- How to use

- When a user logs in, the client obtains tokens (an access token and an ID token) from the IdP. Keycloak, for example, issues both as JWTs.

- Attach Authorization: Bearer <JWT> (the access token, not the ID token) to requests between microservices.

- Each service verifies the JWT's signature and expiry and performs access control based on RBAC or ABAC rules.

- Implementation examples

- Use in RBAC (the roles claim carries the user's roles)

{
  "sub": "user-123",
  "roles": ["admin", "editor"],
  "exp": 1724150400
}

- Use in ABAC (department and device are attributes evaluated by the policy)

{
  "sub": "user-456",
  "department": "marketing",
  "device": "mobile",
  "time": "2025-08-20T10:00:00Z"
}
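Once a service has verified the token's signature with a JWT library, the remaining work is checking the claims. The sketch below covers only that second step, under the assumption that signature verification has already happened elsewhere; `AccessTokenClaims` and `authorize` are illustrative names, and the claim layout follows the RBAC sample payload.

```typescript
// Claims as they would look after a JWT library has verified the signature.
// Assumption: signature verification happens elsewhere; this sketch only checks claims.
interface AccessTokenClaims {
  sub: string;
  exp: number;         // expiry, in seconds since the Unix epoch
  roles?: string[];    // custom claim used for RBAC
  department?: string; // custom claim used for ABAC
}

type Decision = { allowed: true } | { allowed: false; reason: string };

function authorize(
  claims: AccessTokenClaims,
  requiredRole: string,
  nowSeconds: number
): Decision {
  // Reject expired tokens before looking at anything else.
  if (claims.exp <= nowSeconds) return { allowed: false, reason: "token expired" };
  // RBAC check against the roles carried in the token.
  if (!(claims.roles ?? []).includes(requiredRole)) {
    return { allowed: false, reason: `missing role: ${requiredRole}` };
  }
  return { allowed: true };
}

const sampleClaims: AccessTokenClaims = {
  sub: "user-123",
  roles: ["admin", "editor"],
  exp: 1724150400,
};

console.log(authorize(sampleClaims, "admin", 1724150000));   // allowed
console.log(authorize(sampleClaims, "auditor", 1724150000)); // rejected: missing role
console.log(authorize(sampleClaims, "admin", 1724150401));   // rejected: token expired
```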

Considering Deployment Strategies for Microservices

For microservices deployment, it is important to choose an appropriate strategy considering scale, deployment frequency, and fault tolerance. This section explains two representative strategies, Blue-Green deployment and Canary release, as well as the use of GitOps.

Blue-Green Deployment

Blue-Green deployment is a release method that switches between two environments (Blue / Green). While the production environment (Blue) is running, you deploy the new version (Green), and if there are no problems, you switch traffic.

- Advantages

- No downtime → Release is completed simply by switching traffic.

- Easy rollback → You can immediately revert to the old version if a problem occurs.

- Testing in a consistent environment → You can test in the Green environment under production-like conditions.

- Disadvantages

- You need two environments → Increased resource cost.

- You must consider data compatibility → Be careful when changing DB schemas.

Canary Release

Canary release is a method of gradually increasing traffic to the new version. For example, you start by sending 5% of requests to the new version, and if there are no problems, you proceed step by step to 20% → 50% → 100%.

- Advantages

- Enables gradual release → Limits the impact range.

- Validates the new version with real traffic → Safely roll out while observing actual user behavior.

- Easy rollback → You can immediately revert to the old version if a problem occurs.

- Disadvantages

- Monitoring is essential → You need log collection and error rate monitoring.

- Requires traffic control → Tools such as Istio or Argo Rollouts are needed.
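The traffic split itself is normally handled by a service mesh or rollout controller, but the idea can be sketched in application code. The example below hashes a user ID into one of 100 buckets and sends the lowest buckets to the canary, so a given user stays on the same version while the percentage grows. `bucketOf` and `chooseVersion` are illustrative names, and the hash is an arbitrary stable function chosen for the example.

```typescript
// Deterministic canary routing: map each user to a bucket from 0 to 99 and
// send buckets below the canary percentage to the new version.
function bucketOf(userId: string): number {
  let hash = 0;
  for (let i = 0; i < userId.length; i++) {
    // Simple 32-bit rolling hash; any stable hash would do.
    hash = (hash * 31 + userId.charCodeAt(i)) >>> 0;
  }
  return hash % 100;
}

function chooseVersion(userId: string, canaryPercent: number): "stable" | "canary" {
  return bucketOf(userId) < canaryPercent ? "canary" : "stable";
}

// Count how many of 1,000 synthetic users land on the canary at each rollout step.
const users = Array.from({ length: 1000 }, (_, i) => `user-${i}`);
for (const percent of [5, 20, 50, 100]) {
  const canaryCount = users.filter((u) => chooseVersion(u, percent) === "canary").length;
  console.log(`${percent}% target -> ${canaryCount} of ${users.length} users on canary`);
}
```

Because the bucket depends only on the user ID, anyone who saw the canary at 5% keeps seeing it at 20% and beyond, which avoids users flipping between versions during the rollout.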

Using GitOps

GitOps is a method where the Git repository is the single source of truth for deployments. Using ArgoCD or Flux, you configure the system so that changes in Git are directly reflected in the Kubernetes environment.

- Advantages

- Ensures deployment consistency → Since all changes are recorded in Git, you can prevent environment drift.

- Simple rollback → If a problem occurs, you can roll back by reverting to the previous commit and letting the GitOps tool re-sync the cluster.

- Improved security → Eliminates manual kubectl apply and helps prevent unauthorized changes.

Transaction Management Between Services

In a microservices architecture, each service typically operates independently and has its own database. Therefore, you cannot easily guarantee ACID transactions (Atomicity, Consistency, Isolation, Durability) as with transactions on a single database. Here we explain approaches to address this problem.

Data Consistency Issues

- Transactions spanning different databases

- Example: The Order Service creates an order and the Payment Service processes the payment.

- If the Payment Service fails midway, inconsistency occurs in the Order Service (the order is confirmed even though payment is not completed).

- Distributed transaction management is complex

- Typical database transaction features (BEGIN, COMMIT, ROLLBACK) only work within a single DB.

- Traditional distributed transaction management such as Two-Phase Commit (2PC) is complex and has performance issues.

Introducing the Saga Pattern

The Saga pattern is used to ensure data consistency in distributed systems. It avoids 2PC and ensures data consistency through a combination of local transactions.

Two types of Sagas

-

Choreography (event-driven)

- Each service listens to events from other services and autonomously executes the next process.

- Advantages:

- For simple flows, management cost is low.

- Disadvantages:

- Services become implicitly coupled through the events they exchange, so the overall flow is hard to trace and changes can cascade.

- Use cases: Small services, simple workflows.

-

Orchestration (managed)

- A central Saga Coordinator (orchestrator) controls processing for each service.

- Advantages:

- Workflow is easier to visualize.

- Easier to localize the impact of changes.

- Disadvantages:

- The coordinator can become a bottleneck.

- Use cases: Complex workflows, large-scale services.

Sample code for the Saga pattern (Orchestration)

The following simplified code shows a Saga orchestrator driving order creation → payment completion → inventory reservation. It only publishes the command for each step, so the catch block handles publish failures only; a production orchestrator would wait for each service's reply event before moving on and would start compensation when a reply reports a failure.

import { Kafka } from "kafkajs";

const kafka = new Kafka({ clientId: "saga-coordinator", brokers: ["localhost:9092"] });
const producer = kafka.producer();
const consumer = kafka.consumer({ groupId: "order-group" });

// Publish a JSON payload to a topic
async function publish(topic: string, payload: object) {
  await producer.send({ topic, messages: [{ value: JSON.stringify(payload) }] });
}

async function startSaga() {
  await consumer.connect();
  await producer.connect();
  await consumer.subscribe({ topic: "order_created", fromBeginning: true });

  await consumer.run({
    eachMessage: async ({ message }) => {
      if (!message.value) return; // message.value can be null
      const { orderId, userId, amount } = JSON.parse(message.value.toString());
      try {
        // Step 1: Payment processing
        await publish("payment_request", { orderId, userId, amount });
        // Step 2: Inventory reservation
        await publish("inventory_reserve", { orderId });
        // Step 3: Order confirmation
        await publish("order_confirmed", { orderId });
      } catch (error) {
        console.error("Saga transaction failed, initiating rollback", error);
        // Step 4: Rollback (compensating transaction)
        await publish("order_rollback", { orderId });
      }
    },
  });
}

startSaga().catch(console.error);
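The compensation logic at the heart of the pattern can be shown without a broker. In the sketch below, each step has an action and a compensating action; when a step fails, the orchestrator undoes the steps that already succeeded, in reverse order. The steps are synchronous in-memory stand-ins for calls to real services, and `SagaStep` and `runSaga` are names made up for this example.

```typescript
// In-memory Saga orchestrator: run steps in order and, on failure, run the
// compensations of the steps that already succeeded, in reverse order.
interface SagaStep {
  name: string;
  execute: () => void;    // throws on failure
  compensate: () => void; // undoes a successful execute
}

function runSaga(steps: SagaStep[], log: string[]): boolean {
  const completed: SagaStep[] = [];
  for (const step of steps) {
    try {
      step.execute();
      log.push(`done:${step.name}`);
      completed.push(step);
    } catch {
      log.push(`failed:${step.name}`);
      // Undo in reverse order so later effects are rolled back first.
      for (const done of completed.reverse()) {
        done.compensate();
        log.push(`compensated:${done.name}`);
      }
      return false;
    }
  }
  return true;
}

const sagaLog: string[] = [];
const succeeded = runSaga(
  [
    { name: "payment", execute: () => {}, compensate: () => {} },
    {
      name: "inventory",
      execute: () => { throw new Error("out of stock"); },
      compensate: () => {},
    },
    { name: "confirm-order", execute: () => {}, compensate: () => {} },
  ],
  sagaLog
);

console.log(succeeded, sagaLog);
// false [ 'done:payment', 'failed:inventory', 'compensated:payment' ]
```

In a Kafka-based orchestrator the same structure applies, except that each `execute` publishes a command and then waits for the corresponding reply event.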

Standardizing and Maintaining API Documentation

Standardizing API documentation is important for smooth communication within and outside the development team and for improving maintainability and extensibility. This section covers the main challenges of API documentation, ways to address them, and implementation samples.

Challenges

- API specifications are not unified

- If each team designs APIs in different formats, client implementation becomes complicated and can cause misunderstandings and bugs.

- Ambiguous version control

- The impact range of API changes becomes unclear and may cause issues for existing consumers.

Automatically Generating API Documentation Using OpenAPI (Swagger)

By using OpenAPI (formerly Swagger), you can centrally manage API specifications and keep them up to date.

- Steps

- Define the OpenAPI specification (openapi.yaml).

- Use Swagger UI or Redoc to automatically generate documentation.

- Integrate into CI/CD to automatically keep documentation up to date.

openapi: 3.0.0
info:
  title: Sample API
  version: 1.0.0
paths:
  /users:
    get:
      summary: Get list of users
      description: Retrieve all registered users
      responses:
        '200':
          description: Success
          content:
            application/json:
              schema:
                type: array
                items:
                  type: object
                  properties:
                    id:
                      type: integer
                    name:
                      type: string

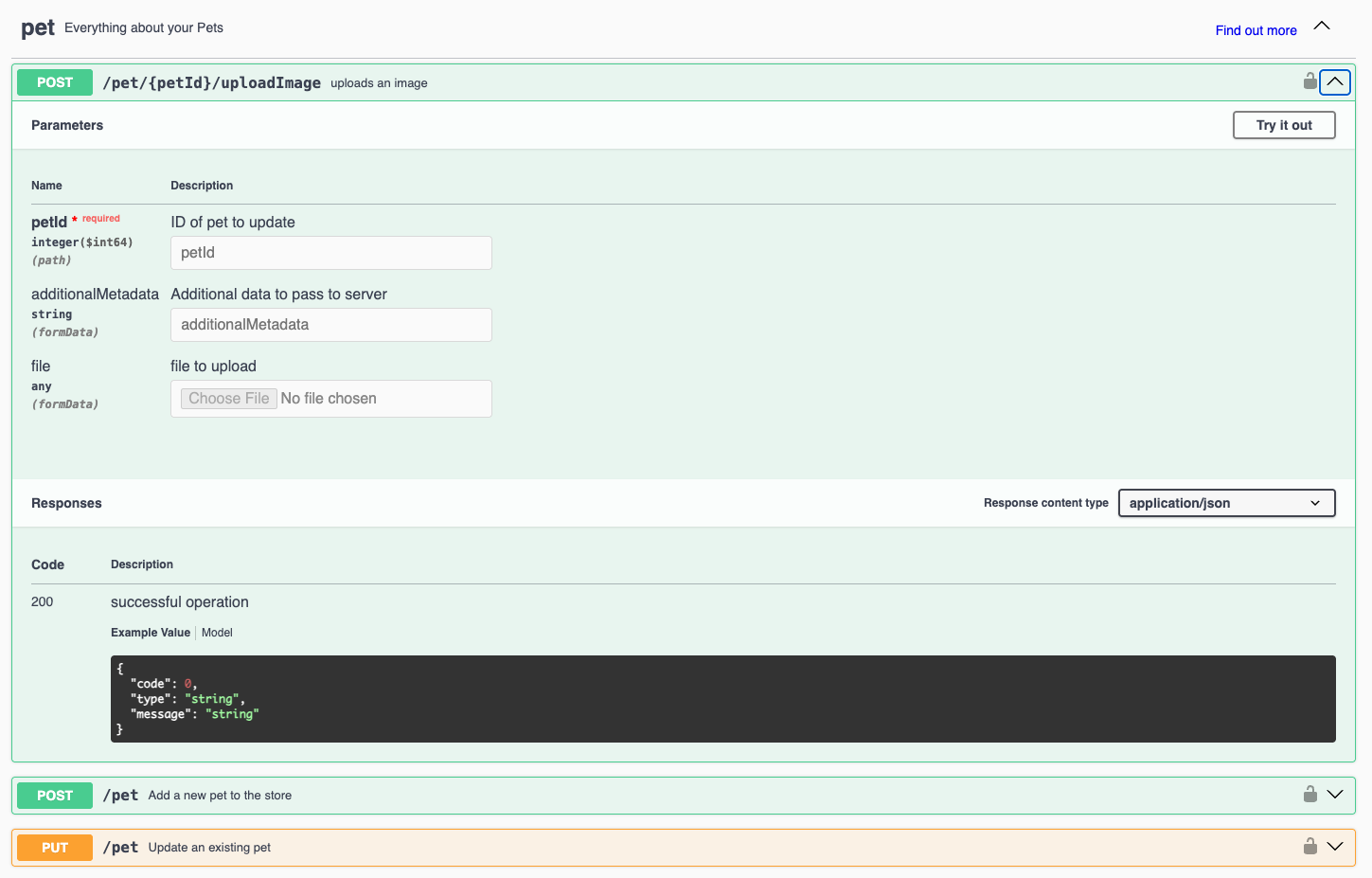

Image of Swagger UI

Defining an API Versioning Strategy

If API versioning is not appropriate, you cannot minimize the impact of changes and may break client compatibility. By adopting the following strategies, you can manage versions smoothly.

| Method | Advantages | Disadvantages |

|---|---|---|

| URL-based (/v1/users) | Simple and easy to understand | URLs change, so clients must be updated |

| Header-based (Accept: application/vnd.myapi.v1+json) | Endpoints do not change | Clients must properly manage headers |

| Request parameter (?version=1) | Flexible version specification | Easy to omit, so a default version must be defined; the parameter also becomes part of cache keys |

Sample version management

import express from "express";

const app = express();

// URL-based versioning: each version gets its own route
app.get("/v1/users", (req, res) => {
  res.json({ message: "This is API v1" });
});

app.get("/v2/users", (req, res) => {
  res.json({ message: "This is API v2 with additional fields" });
});

app.listen(3000, () => console.log("API Server running on port 3000"));

/v1/users and /v2/users return different responses, so existing clients can stay on v1 while new clients adopt v2.
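For comparison, header-based versioning from the table can be sketched as a function that reads the version from the Accept header and dispatches to the matching handler. The media type follows the `application/vnd.myapi.v1+json` example in the table; the default version, the 406 response for unknown versions, and the function names are assumptions made for this example.

```typescript
// Extract the API version from a vendor media type such as
// "application/vnd.myapi.v1+json". Falls back to a default when absent.
const DEFAULT_VERSION = 1; // assumption: requests without a version get v1

function parseApiVersion(acceptHeader: string | undefined): number {
  if (!acceptHeader) return DEFAULT_VERSION;
  const match = /application\/vnd\.myapi\.v(\d+)\+json/.exec(acceptHeader);
  return match ? Number(match[1]) : DEFAULT_VERSION;
}

// Handlers keyed by version; the response bodies mirror the URL-based sample.
const userHandlers: Record<number, () => { message: string }> = {
  1: () => ({ message: "This is API v1" }),
  2: () => ({ message: "This is API v2 with additional fields" }),
};

function handleUsers(
  acceptHeader: string | undefined
): { status: number; body: { message: string } } {
  const version = parseApiVersion(acceptHeader);
  const handler = userHandlers[version];
  if (!handler) {
    // The client asked for a version this server does not provide.
    return { status: 406, body: { message: `Unsupported API version: ${version}` } };
  }
  return { status: 200, body: handler() };
}

console.log(handleUsers("application/vnd.myapi.v2+json")); // v2 response
console.log(handleUsers("application/json"));              // default: v1 response
console.log(handleUsers("application/vnd.myapi.v9+json")); // 406: unsupported version
```

In an Express app, `handleUsers(req.get("Accept"))` could back a single /users route, keeping the URL stable across versions.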

Conclusion

To succeed with a microservices architecture, it is not enough to simply split services; proper technology selection and integration strategies are essential. This article introduced concrete approaches using technologies such as BFF, API Gateway, gRPC, Kafka, RabbitMQ, Keycloak, and Swagger.

The technologies you introduce will vary depending on your project requirements, but by designing with scalability, security, and maintainability in mind, you can build a sustainable system.

If you are about to introduce microservices, select technologies that match your own requirements and design an appropriate architecture. We hope this article will be of some help.
